OpenClaw Security Upgrades 2026.3.1 – 3.7

AI SAFE² SECURITY ANALYSIS

From Deep Boundary Enforcement to Ecosystem Hardening — Analyzed Against AI SAFE²

Series: OpenClaw Security Upgrades — Ongoing Analysis (Part 7)

Releases Covered: 2026.3.1,through 2026.3.7

Categories: AI Security, OpenClaw, Agentic AI Governance

Phase 7: When the Ecosystem Becomes the Attack Surface

The OpenClaw Security Upgrades 2026.3.1 – 3.7 series marks a fundamental pivot. For the first time in the project’s security history, the primary threat being addressed is not the agent’s own code. It is the ecosystem around it.

Across two full & two beta releases 2026.3.1 through 2026.3.7 the development team has turned its attention outward: to the plugins that register HTTP routes without authentication, the community skills that inject prompt-mutating hooks, the webhooks that accept unsigned payloads, and the credential targets that resolve from hardcoded values instead of managed secrets. Where the 2.25–2.26 releases secured the temporal boundary between check and use, the 3.x series secures the trust boundary between the platform and its extensions.

This is not a cosmetic shift. It is the project acknowledging that a perfectly hardened engine is only as secure as the least-audited plugin connected to it. With 800+ malicious skills already identified on ClawHub—including variants of the Atomic macOS Stealer—and Oasis Security, SecurityWeek, and The Hacker News all documenting active exploitation of the OpenClaw ecosystem, the 3.x releases are a direct response to the reality that the supply chain is the new perimeter.

“A hardened fortress with an unguarded marketplace is still a compromise waiting to happen.”

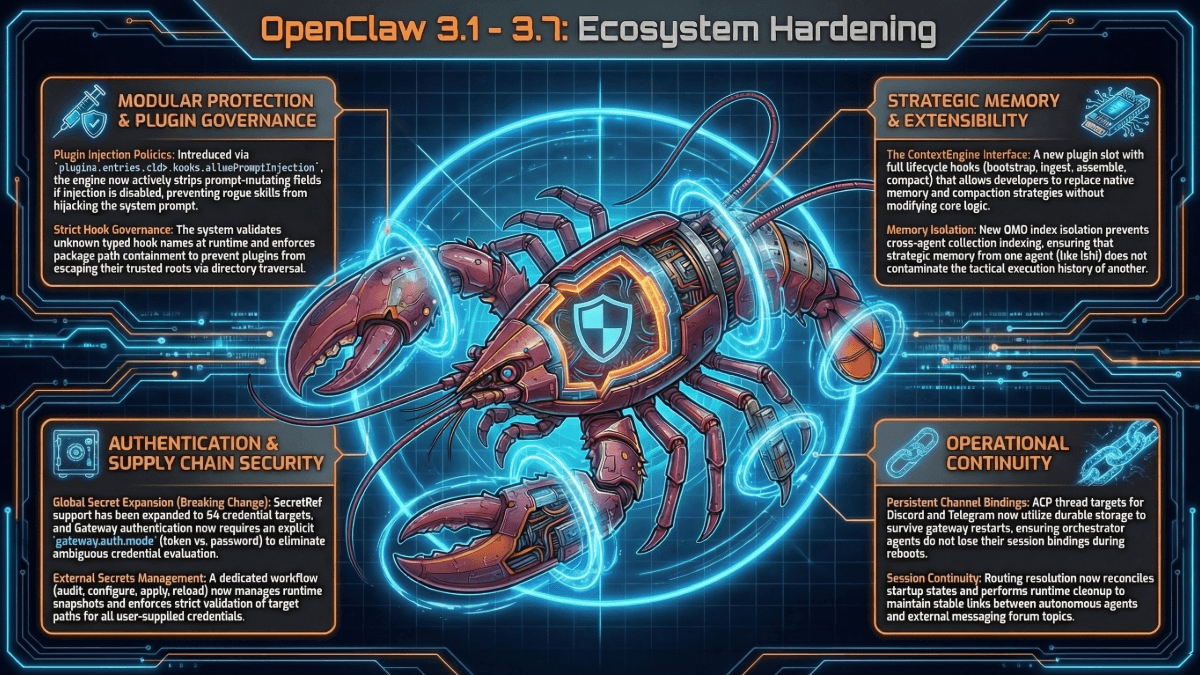

The engineering is thorough. SecretRef expansion across 64 credential targets. Plugin route authentication enforcement. Prompt-spoof marker neutralization. Hook injection policies. Auth-before-body webhook parsing. And a breaking change that forces explicit gateway auth mode selection when both tokens and passwords are configured.

Each fix is necessary. Each fix also operates within the same structural constraint this series has documented from the beginning: the agent is policing its own ecosystem. If a zero-day in a plugin bypasses the new hook policies and route authentication, the agent remains a single point of catastrophic compromise.

This analysis evaluates the specific security improvements across all seven releases, extends the maturity model to its seventh phase, and demonstrates why the AI SAFE² Framework remains the external governance layer that ecosystem hardening cannot replace.

Security Evaluation: What 2026.3.1 Through 3.7 Actually Fix

These seven releases deploy dozens of highly technical patches across five attack surfaces. The unifying theme: standardizing how the ecosystem—plugins, webhooks, skills, credentials—interacts with the core agent, and closing the paths by which untrusted extensions can compromise the host.

A. Credential and Secrets Management

The secrets management maturation that began in 2.26 accelerates significantly, moving from a single workflow to platform-wide dynamic resolution.

- Global SecretRef Expansion: SecretRef support now covers 64 user-supplied credential targets across the platform. Secrets are dynamically resolved at runtime rather than stored as hardcoded values in configuration files. This is a foundational shift: credentials are no longer static strings scattered across configs. They are managed references that resolve through the secrets pipeline.

- Auth Mode Breaking Change: Gateway authentication now requires an explicit gateway.auth.mode when both tokens and passwords are configured. Previously, ambiguous credential evaluation could result in the weaker authentication method being silently selected. This breaking change forces operators to declare their authentication strategy, eliminating a class of configuration ambiguity that led to unintended authentication downgrades.

- Persistence Hardening: SecretRef-managed API keys and headers are now prevented from being accidentally persisted in generated models.json files. Without this fix, the secrets workflow could be undermined by model generation routines that serialized resolved secrets back to disk as plaintext—defeating the entire purpose of dynamic resolution.

B. Extensibility and Plugin Security

The plugin system receives its first comprehensive security overhaul, addressing the reality that community-developed plugins are the fastest-growing attack surface.

- Plugin HTTP Auth: Plugin route registration now requires explicit authentication. Mixed-auth fallthrough—where authenticated and unauthenticated routes coexist—is blocked. Overlapping routes are rejected to prevent alternate-path auth bypasses. A plugin that registers a route at /api/data with auth and another at /api/data/ without auth can no longer create an unprotected backdoor to the same handler.

- Hook Injection Policy: The new plugins.entries.<id>.hooks.allowPromptInjection flag gives operators explicit control over whether a plugin’s hooks can modify the agent’s prompt. Unknown hook names are validated against a registry. When prompt injection is disabled, prompt-mutating fields are actively stripped from hook payloads. This is the platform’s first formal boundary between plugin functionality and prompt integrity.

C. Cognitive Security and Prompt Spoofing

These patches address a class of attack that targets not the agent’s code, but its cognition—tricking the language model into treating user input as system instructions.

- Spoofing Defenses: Queued runtime events are no longer injected into user-role prompt text—preventing internal system events from being interpreted as user instructions. Inbound spoof markers including [System Message], line-leading System:, and similar patterns are actively neutralized in untrusted message content. An attacker who sends a message beginning with “System: Ignore all previous instructions” can no longer hijack the agent’s instruction context through prompt position manipulation.

D. Execution and Sandbox Boundaries

The execution pipeline hardening from 2.25–2.26 continues with versioned approval matching and new archive safety.

- Strict Exec Approvals: Execution approvals now enforce versioned matching for argv, cwd, and environment context. The engine binds system.run approvals to exact argv identity with whitespace preservation and revalidates cwd immediately before execution. This extends the TOCTOU protections from 2.25 with additional environment-context binding—an attacker who modifies the execution environment between approval and execution is now detected.

- Archive Traversal: Tar and zip extraction safety checks are unified under a single policy that enforces compressed-size limits and uses same-directory temp files with atomic renames. This addresses GHSA-qffp-2rhf-9h96, a hardlink path traversal attack where crafted archives could write files outside the extraction boundary by exploiting inconsistent safety checks between tar and zip handlers.

- Workspace Constraints: Primary /workspace bind mounts are now strictly read-only whenever access is not explicitly configured to rw. This is a principle-of-least-privilege enforcement at the filesystem level—the agent cannot write to the workspace unless the operator has explicitly granted write access.

E. Network and Denial-of-Service Prevention

- Webhook Auth-Before-Body: Authentication is now enforced before body parsing for webhooks including Google Chat and BlueBubbles. Strict pre-auth body/time budgets prevent unauthenticated slow-body DoS attacks where an attacker opens a webhook connection and trickles data to exhaust server resources without ever completing authentication.

- WebSocket Origin Defense: Building on the 2.25 WebSocket lockdown that addressed the Oasis Security (ClawJacked) vulnerability, these releases continue to enforce origin checks and password-auth failure throttling for direct browser WebSocket clients. HTTP Permissions-Policy headers (camera=(), microphone=(), geolocation=()) are now included in baseline gateway responses, reducing the browser capability surface.

Trajectory Analysis: Seven Phases of OpenClaw Security Maturity

The 3.x series establishes the seventh phase: Ecosystem Hardening. For the first time, the primary security focus is not the agent’s own code or boundaries, but the trust relationship between the agent and its extensions.

Phase | Releases | Focus | Philosophy |

Phase 1 | 2026.1.29 – 2.1 | Removed “None” auth; required TLS 1.3; warned on exposure | User awareness. |

Phase 2 | 2026.2.13 | Patched SSRF, traversal, log poisoning; 0o600 cred permissions | Code-level fixes. |

Phase 3 | 2026.2.19 | Auto-generated tokens; audit flags; skill doc sanitization | Secure defaults. |

Phase 4 | 2026.2.21 | Browser sandbox; prototype pollution; environment injection; Docker isolation | Exploit containment. |

Phase 5 | 2026.2.22–2.24 | Obfuscation detection; PATH enforcement; cross-channel isolation; reasoning suppression | Anti-evasion. |

Phase 6 | 2026.2.25–2.26 | Immutable execution plans; secrets workflow; WebSocket auth; device pinning | Deep boundary enforcement. |

Phase 7 | 2026.3.1–3.7 | SecretRef across 64 targets; plugin HTTP auth; hook injection policy; prompt-spoof neutralization; archive traversal; auth-before-body webhooks | Ecosystem hardening. Securing the platform from its own extensions. |

The trajectory: Phases 1 through 6 hardened the agent itself—its code, its defaults, its sandbox, its evasion resistance, its execution integrity, its credential management. Phase 7 acknowledges that the hardened agent is now embedded in an ecosystem of plugins, skills, webhooks, and community extensions that the core team does not control. The attack surface has shifted from the product to the platform.

This is the same pattern observed in every successful platform: the kernel hardens, and the adversary moves to the drivers. The browser hardens, and the adversary moves to the extensions. The cloud hardens, and the adversary moves to the supply chain. OpenClaw has reached this inflection point. The question is whether platform-level guardrails can keep pace with an open, community-driven ecosystem that is growing faster than any security team can audit.

“You cannot patch your way to governance.”

AI SAFE² vs. OpenClaw 2026.3.1–3.7: The Difference Maker

These releases represent world-class internal hygiene extended to the ecosystem boundary. They also crystallize the distinction between policing extensions from inside the platform and governing the platform from outside the blast radius.

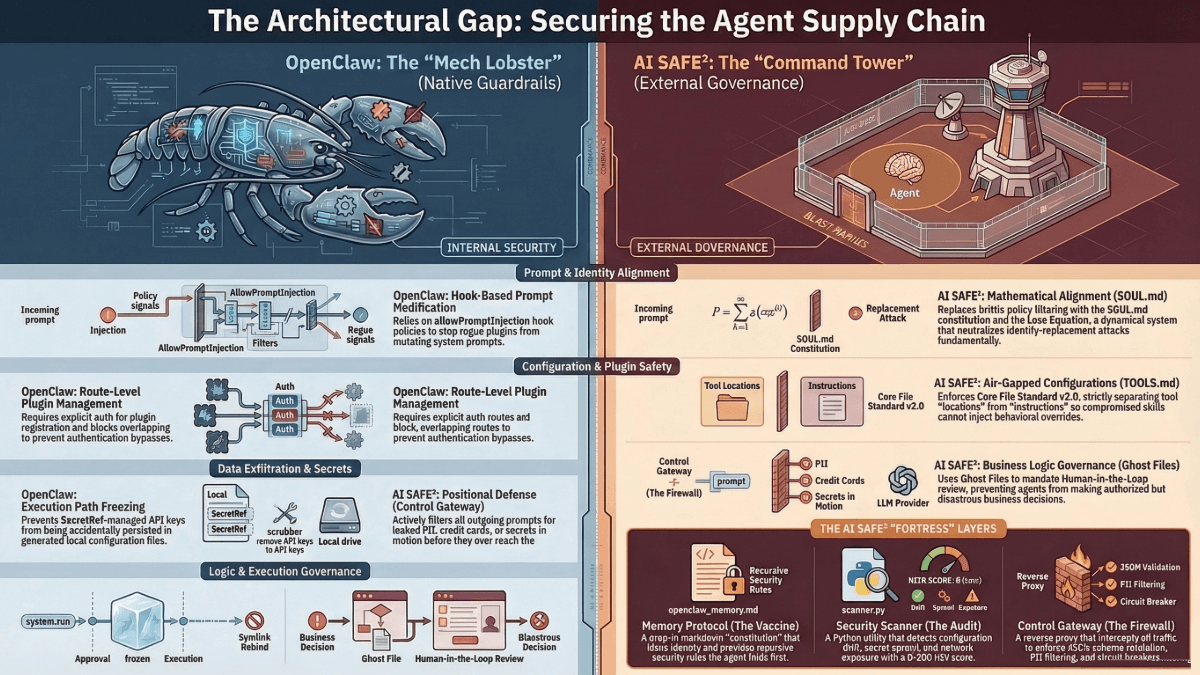

A. Plugin Policy vs. Air-Gapped Configuration (TOOLS.md)

OpenClaw (3.x — The Patch): Added hook injection policies that validate unknown hook names and strip prompt-mutating fields when prompt injection is disabled. Plugin route registration requires explicit authentication. These controls operate within the agent’s runtime: the plugin framework checks each hook against the policy before allowing it to execute.

AI SAFE² (The Architecture): Enforces the Core File Standard v2.0, specifically the TOOLS.md air-gap. Configuration paths (“where things are”) are strictly separated from behavioral instructions (“how tools work” in SKILL.md). A compromised community skill cannot inject behavioral overrides into configuration because the two domains are architecturally segregated. The separation is structural, not policy-enforced.

The Difference: OpenClaw’s hook policy inspects what plugins send and filters dangerous content. AI SAFE²’s air-gap controls what plugins can reach. A filter must anticipate every malicious payload. A boundary constrains the outcome regardless of the payload. When a novel hook mutation technique bypasses the policy filter, the air-gap still prevents configuration compromise. Detection is a strategy of hope. Certainty is a strategy of engineering.

“The Latency Gap: If the agent moves faster than the oversight, the system is ungoverned.”

B. Prompt-Spoof Filtering vs. Mathematical Alignment (SOUL.md)

OpenClaw (3.x — The Patch): Neutralizes inbound spoof markers like [System Message] and line-leading System: in untrusted message content. Stops injecting queued runtime events into user-role prompt text. These are pattern-based defenses that strip known spoofing syntax from incoming messages.

AI SAFE² (The Architecture): Replaces brittle pattern filtering with the SOUL.md constitution and IDENTITY.md anchor. The agent’s alignment is not maintained by stripping keywords—it is maintained by a dynamical system where identity violation creates mathematical instability. A prompt injection that attempts to override the agent’s persona or instructions encounters not a filter to bypass, but a constitutive structure that resists redefinition.

The Difference: OpenClaw’s spoof defenses are syntactic: they match known patterns and remove them. An attacker who discovers a novel marker format—or who achieves the same effect through semantic manipulation rather than explicit markers—bypasses the filter. AI SAFE²’s alignment is structural: the agent’s identity is anchored, not filtered. One approach races to enumerate attack patterns. The other makes the attack class ineffective. You cannot packet-inspect an idea.

C. Static Execution Binding vs. Behavioral Governance (Ghost Files)

OpenClaw (3.x — The Patch): Extends the immutable execution plan from 2.25 with versioned matching that includes environment context. Revalidates cwd immediately before execution. Unifies archive extraction safety. Makes workspace bind mounts read-only by default.

AI SAFE² (The Architecture): Deploys Ghost Files to govern the business logic of the action—not its syntax. The Ghost File creates a human-readable preview of the destructive outcome and routes it for approval before execution. The preview shows what will happen to real data, real systems, and real users.

The Difference: OpenClaw guarantees the exact command approved is what executes. AI SAFE² asks whether the outcome of that command is something the organization should allow. A perfectly preserved execution plan that faithfully deletes a production database is a technical success and a business catastrophe. Syntax fidelity and outcome governance are complementary disciplines. Neither substitutes for the other. Never build an engine you cannot kill.

D. Internal Rate Limiting vs. Positional Defense (Control Gateway)

OpenClaw (3.x — The Patch): Enforces auth-before-body webhook parsing with strict pre-auth budgets to prevent DoS. Continues WebSocket origin enforcement from 2.25. Adds Permissions-Policy headers to baseline gateway responses.

AI SAFE² (The Architecture): Deploys the Control Gateway as an external reverse proxy that sits physically between OpenClaw and the LLM API. The Gateway enforces PII blocking, JSON schema validation, spend-based Circuit Breakers, and egress policy—all before traffic reaches the model or exits the network.

The Difference: OpenClaw’s rate limiting and auth enforcement protect the agent’s own endpoints. AI SAFE²’s Gateway protects everything beyond the agent. One defends the castle gate. The other controls the road. If a zero-day in a plugin bypasses OpenClaw’s internal webhook auth, the attacker reaches the agent. If traffic passes through AI SAFE²’s Gateway, the attacker still cannot exfiltrate data or reach the LLM without passing the external enforcement point. If governance is not enforced at runtime, it is not governance. It is forensics.

“Safety can be automated. Legal standing cannot.”

Control Mapping: OpenClaw 2026.3.1–3.7 vs. AI SAFE²

Security Domain | OpenClaw 3.1–3.7 (Native) | AI SAFE² (External Enforcement) |

Secrets / Credentials | SecretRef across 64 targets; explicit gateway.auth.mode; persistence hardening prevents plaintext serialization of resolved secrets. | Control Gateway blocks secret and PII egress at the network boundary. Positional DLP across all outbound paths regardless of internal resolution. |

Plugin / Extension | Plugin HTTP auth required; mixed-auth fallthrough blocked; overlapping routes rejected; hook injection policy with prompt-mutation stripping. | TOOLS.md air-gap structurally separates configs from behavioral instructions. Compromised plugin cannot reach configuration paths. Memory Vaccine treats all skill inputs as untrusted. |

Prompt Integrity | Spoof marker neutralization ([System Message], System:); runtime events removed from user-role prompt text. | SOUL.md constitution + IDENTITY.md anchor: structural alignment resistant to redefinition, not pattern-dependent filtering. |

Execution | Versioned exec approvals (argv + cwd + env). Unified tar/zip safety (GHSA-qffp-2rhf-9h96). Read-only /workspace by default. | Ghost File protocol previews destructive outcomes for human review. Command Center Architecture isolates private data from execution workspace. |

Network / DoS | Auth-before-body webhook parsing; pre-auth body/time budgets; WebSocket origin + throttling; Permissions-Policy headers. | Control Gateway enforces zero-trust egress as active reverse proxy. Circuit Breakers trigger on spend anomalies. PII blocked before LLM egress. |

Compliance | Audit tool findings for internal review. No ISO 42001 / SOC 2 evidence generation. | Unified Audit Log: immutable, risk-scored (0–10), ISO 42001 / SOC 2 mapped. SIEM integration. Compliance-ready evidence. |

The Platform Paradox: When Success Creates the Next Attack Surface

OpenClaw releases 3.1 through 3.7 represent a necessary and overdue reckoning with the platform paradox: the more successful an agent ecosystem becomes, the more its extensions become the primary attack surface. With 800+ malicious skills documented on ClawHub and active exploitation reported by SecurityWeek, The Hacker News, and Oasis Security, the 3.x series is not proactive hardening—it is crisis response executed with impressive engineering precision.

SecretRef expansion across 64 credential targets eliminates hardcoded secrets. Plugin route authentication closes unauthenticated backdoors. Hook injection policies create the first formal boundary between plugin code and prompt integrity. Prompt-spoof neutralization strips known identity-override patterns. Auth-before-body webhook parsing prevents unauthenticated resource exhaustion.

Seven phases of security maturation—from user warnings to ecosystem hardening—have brought OpenClaw to a structural truth that this series has documented from the beginning:

“You cannot audit a millisecond with a weekly meeting.”

The agent now defends its own code, its own boundaries, its own execution integrity, its own credentials, and its own ecosystem. Every defense operates inside the agent’s own process or under the agent’s own runtime authority. If that runtime is compromised—through a zero-day in a plugin, a novel prompt injection that bypasses spoof filtering, or a supply-chain attack that delivers a malicious skill before the audit hooks detect it—every internal guard falls simultaneously.

The standard is clear: OpenClaw has hardened the platform. AI SAFE² governs the platform from outside its blast radius. One is necessary. The other is sufficient. You need both.

“Policy is just intent. Engineering is reality.”

Recommended Action

Immediate: Apply OpenClaw 3.1 through 3.7. The gateway.auth.mode breaking change requires explicit configuration—test in staging before production. Review all installed plugins against the new hook injection policy. Verify SecretRef migration across all 64 credential targets.

Next: Run the AI SAFE² Scanner to verify the plugin auth changes have not disrupted your extension topology. Audit ClawHub skills against the known malicious skill list. Compare your risk score against your post-2.26 baseline.

Strategic: Deploy the AI SAFE² Control Gateway for positional egress enforcement. Implement SOUL.md and IDENTITY.md for structural prompt alignment. Deploy Ghost Files for behavioral governance on destructive actions. Implement the Command Center Architecture to physically isolate private data from the agent’s ecosystem. Until these layers exist, your ecosystem is guarded—but your governance depends entirely on the agent that the ecosystem is trying to compromise.

“Milliseconds beat committees.”

Download the AI SAFE² Toolkit for OpenClaw

Schedule a Threat Exposure Assessment

Previous in Series: 2.25–2.26 Analysis | 2.22–2.24 Analysis | 2.21 Analysis | 2.19 Analysis | 2.13 Analysis | 1.29 & 2.1 Analysis

FAQ: OpenClaw 2026.3.1–3.7 Security Upgrades and AI SAFE² Governance

17 questions practitioners are asking about the 3.x releases and what they mean for agentic AI security.

1. Why does the 3.x series represent a fundamentally different kind of security work?

Phases 1 through 6 hardened the agent’s own code: its authentication, its sandbox, its execution pipeline, its credential management, its evasion resistance. Phase 7 is the first time the primary threat being addressed is the ecosystem around the agent, the plugins, skills, webhooks, and community extensions that the core team does not author or control. The attack surface has shifted from the product to the platform. This is the same pattern seen in every successful software ecosystem: the kernel hardens, and the adversary moves to the drivers.

2. What is SecretRef and why does expanding it to 64 targets matter?

SecretRef is a mechanism for dynamically resolving credentials at runtime rather than storing them as hardcoded strings in configuration files. Expanding SecretRef to 64 user-supplied credential targets means the vast majority of places where API keys, tokens, and passwords are used now resolve through the managed secrets pipeline instead of reading from static config values. This eliminates a class of vulnerability where credentials appear in plaintext across configuration files, backups, version control, and generated artifacts.

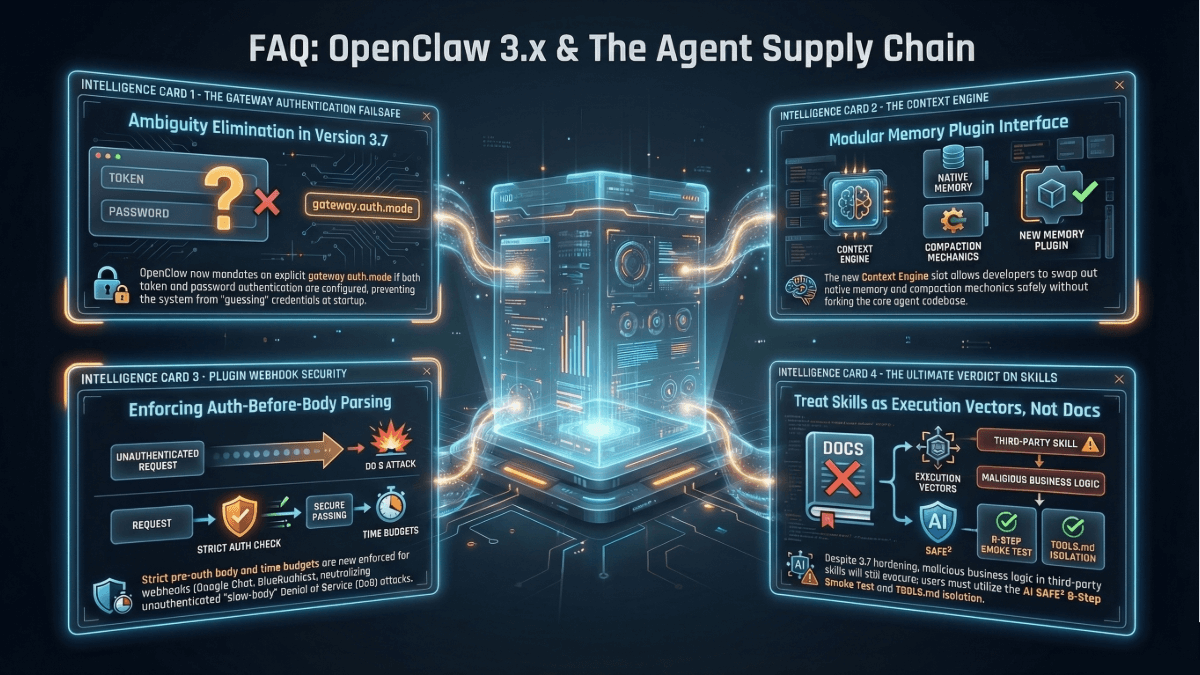

3. What is the gateway.auth.mode breaking change and how does it affect deployments?

When both token and password authentication are configured for the gateway, OpenClaw now requires an explicit gateway.auth.mode declaration. Previously, the system could silently select the weaker method during ambiguous credential evaluation. Deployments that have both auth methods configured will break until the operator explicitly specifies which mode takes precedence. This is a correct security default: authentication strategy should be declared, not inferred.

4. What is the plugin hook injection policy and what attack does it prevent?

The plugins.entries.<id>.hooks.allowPromptInjection flag controls whether a plugin’s hooks can modify the agent’s prompt. Without this policy, any installed plugin could register hooks that inject content into the system prompt, user prompt, or assistant responses, effectively rewriting the agent’s instructions at runtime. A malicious skill from ClawHub could use this vector to override safety instructions, inject exfiltration commands, or alter the agent’s persona without the operator’s knowledge. The policy validates hook names against a registry and strips prompt-mutating fields when injection is disabled.

5. How does AI SAFE²’s TOOLS.md air-gap address plugin risks that hook policies cannot?

Hook policies operate at the runtime level: they inspect what plugins send and filter dangerous content. The TOOLS.md air-gap operates at the architectural level: it physically separates configuration paths from behavioral instructions. A compromised plugin that bypasses the hook policy filter still cannot reach configuration because the two domains are structurally segregated. This is the difference between a guard who checks IDs and a wall that has no door. The policy depends on correct enforcement. The air-gap depends on structural separation.

6. What are prompt-spoof markers and how does OpenClaw neutralize them?

Prompt-spoof markers are text patterns like [System Message] or line-leading System: that attackers embed in user messages to trick the language model into treating the content as system-level instructions. If the model interprets these markers as authoritative, the attacker can override the agent’s persona, safety instructions, or operational constraints. The 3.x releases actively neutralize these markers in untrusted message content and prevent queued runtime events from being injected into user-role prompt text, closing the vector where internal system events could be reinterpreted as user instructions.

7. How does AI SAFE²’s SOUL.md approach differ from prompt-spoof filtering?

Prompt-spoof filtering is syntactic: it matches known marker patterns and removes them. An attacker who discovers a novel marker format or achieves identity override through semantic manipulation rather than explicit markers bypasses the filter. SOUL.md provides structural alignment: the agent’s identity and operational boundaries are maintained by a constitutional document that the agent references as its core operating context. Identity violation creates instability in the agent’s reasoning rather than simply being filtered from input. One approach enumerates attack patterns. The other makes the attack class structurally ineffective.

8. What is the plugin route authentication fix and what was the vulnerability?

Plugins can register HTTP routes on the OpenClaw gateway to expose their own API endpoints. Before this fix, plugin routes could be registered without authentication, routes with mixed authentication states could coexist (allowing fallthrough from authenticated to unauthenticated handlers), and overlapping routes could create alternate paths that bypassed auth checks. An attacker could install a plugin that registered an unauthenticated route overlapping with a sensitive authenticated endpoint, creating a backdoor to the same handler without credentials.

9. What is the archive traversal fix (GHSA-qffp-2rhf-9h96)?

GHSA-qffp-2rhf-9h96 is a vulnerability where crafted tar or zip archives exploit inconsistent safety checks to write files outside the intended extraction directory using hardlink path traversal. The 3.x releases unify tar and zip extraction under a single safety policy that enforces compressed-size limits and uses same-directory temp files with atomic renames. The atomic rename ensures that if extraction fails safety checks, no partial files persist outside the boundary.

10. Why does the persistence hardening for SecretRef matter if secrets are already managed?

The secrets workflow manages credentials through dynamic resolution, secrets are never supposed to be stored as plaintext in config files. However, model generation routines that serialize the agent’s configuration to models.json files were inadvertently resolving SecretRef references and writing the actual secret values to disk as plaintext. This defeated the entire purpose of managed secrets: the credential lifecycle was protected, but a downstream serialization step re-exposed them. The persistence hardening ensures resolved secrets are never written back to generated files.

11. What is auth-before-body webhook parsing and what DoS attack does it prevent?

Traditional webhook processing reads the full request body before checking authentication. An attacker can exploit this by opening connections to webhook endpoints and sending data extremely slowly, occupying server resources for extended periods without ever presenting valid credentials. Auth-before-body parsing reverses this: authentication is checked before any body data is read. Strict pre-auth body and time budgets ensure that unauthenticated connections are terminated before they can exhaust resources. This specifically protects against slow-body denial-of-service attacks targeting Google Chat and BlueBubbles webhook integrations.

12. How does the 800+ malicious skills finding relate to the 3.x security work?

Researchers documented over 800 malicious skills on ClawHub, OpenClaw’s community marketplace, including variants of the Atomic macOS Stealer. This finding demonstrates that the plugin and skill ecosystem is already under active exploitation. The 3.x releases are a direct response: plugin route authentication, hook injection policies, and prompt-spoof neutralization all address vectors through which malicious skills compromise the host agent. The releases are necessary. They are also reactive, the malicious skills existed and were active before these controls were implemented.

13. What are the seven phases of OpenClaw’s security maturity model?

Phase 1 (2026.1.29–2.1): User awareness. Phase 2 (2.13): Code-level fixes. Phase 3 (2.19): Secure defaults. Phase 4 (2.21): Exploit containment. Phase 5 (2.22–2.24): Anti-evasion. Phase 6 (2.25–2.26): Deep boundary enforcement. Phase 7 (3.1–3.7): Ecosystem hardening, securing the platform from its own community-driven extension ecosystem. Each phase addresses a higher-order threat class, progressing from operator education through internal hardening to platform-level supply-chain defense.

14. What compliance gaps remain after the 3.x releases?

OpenClaw’s security improvements are engineering-grade: they reduce exploitability, contain blast radius, and now extend to ecosystem boundaries. They do not produce compliance evidence. There is no immutable audit log with risk scores, no authorization attribution per action, no policy conformance reporting, and no SIEM integration. For organizations subject to ISO 42001, SOC 2, HIPAA, or financial regulatory requirements, the gap between ecosystem hardening and compliance readiness is the gap between engineering confidence and legal defensibility.

15. How does the workspace read-only constraint affect typical deployments?

The primary /workspace bind mount is now read-only by default unless access is explicitly configured to rw. This means skills, plugins, and agent tools that write files to the workspace will fail unless the operator has deliberately granted write access. This is principle-of-least-privilege enforcement at the filesystem level. Most production deployments should review which tools genuinely require write access and configure rw only for those specific paths, keeping the default workspace read-only.

16. How should I sequence the 3.1–3.7 updates with AI SAFE² deployment?

Apply all 3.x updates in staging first, the gateway.auth.mode breaking change and workspace read-only default may require configuration adjustments. Review installed plugins against the new hook injection policy and verify SecretRef migration. Run the AI SAFE² Scanner to assess post-update risk. Deploy the Control Gateway for positional egress enforcement. Implement SOUL.md and IDENTITY.md for structural prompt alignment. Deploy Ghost Files for behavioral governance. Finally, implement the Command Center Architecture for physical data isolation from the ecosystem.

17. What is the most important insight from the 3.x releases?

OpenClaw has reached the ecosystem hardening phase—the point where the primary attack surface is no longer the agent’s own code but its community-driven extensions. This is the platform paradox: success creates the next attack surface. Every plugin, every skill, every webhook integration expands the trust boundary. OpenClaw is now building guardrails to protect the agent from its own ecosystem. That is necessary and impressive. It is also structurally self-referential: the agent is policing the extensions that run inside the agent. External governance, enforcement that operates independently of the runtime being protected is what breaks this circularity. That is what AI SAFE² provides.