OpenClaw Security Upgrades Compared Against AI SAFE²

The “Patch vs. Policy” Dilemma: Why Code Fixes Aren’t Enough for Agentic AI

Open Openclaw security items (from recent releases)

From the last three releases, Openclaw has made strong progress but still leaves responsibility for safe deployment and operation largely to the operator.

OpenClaw Releases https://github.com/openclaw/openclaw/releases

Key remaining or only-partially-addressed areas:

- Secure exposure by default: 2026.1.29 and 2026.2.1 improve gateway auth, warn on unauthenticated exposure, and require TLS 1.3, but they still assume you will correctly deploy and network-isolate gateway/control UI yourself.

- Prompt-injection and tool-abuse controls: there are system-prompt guardrails, tool policy snapshots, exec allowlists, and hardened web tool/file parsing, but they are mostly static configuration and code-level guardrails, not a unified runtime risk model that scores and blocks multi-step tool sequences.

- Cross-channel identity and allowlists: Matrix, Slack, Telegram, Twitch, WhatsApp, etc., receive incremental hardening (full MXIDs, allowlists, media fetch limits, path-traversal fixes), but policy remains channel-specific and scattered instead of governed by a single external policy engine.

- Memory and log sensitivity: recent releases add QMD workspace memory backend and improve memory search, plus multiple LFI/SSRF guards and media-path sanitization, but secret redaction and “no-secrets-out” behavior are not centrally enforced across every path (memory, logs, tools, gateways).

- Human-in-the-loop for high‑risk actions: Openclaw has operator approvals for some gateway commands, exec-approval docs, and exec safeBins, but there is no mandatory external approvals layer that a compromised Openclaw instance cannot bypass.

- Audit and compliance posture: there are audit notes, gateway warnings, and docs for private deployments, but SOC 2 / ISO 42001 style evidence (central immutable audit logs, risk scores, and policy conformance reports) are not produced automatically by Openclaw alone.

In practice, many of the most serious Openclaw incidents in the wild are driven by misconfiguration (0.0.0.0 binding, weak/no gateway auth, API keys in logs, over-permissive tools) rather than missing code-level patches; those risks are still easy to reintroduce with each upgrade or re-deploy.

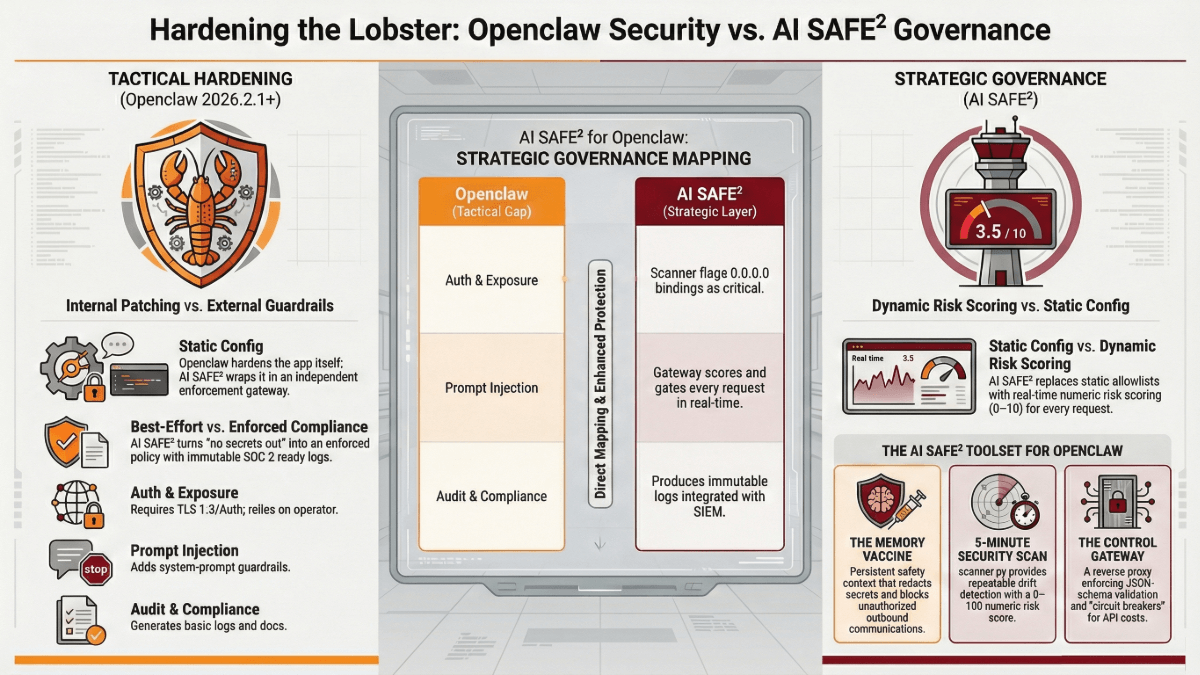

AI SAFE² Openclaw controls (what it adds)

The AI SAFE² Openclaw example packages three layers: a memory protocol, a local scanner, and an external control gateway.

AI SAFE2 OpenClaw Tools: https://github.com/CyberStrategyInstitute/ai-safe2-framework/tree/main/examples/openclaw

Major capabilities:

- Memory protocol (

openclaw_memory.md): persistent “always-on” safety context for Openclaw that blocks external communications without explicit approval, detects prompt injection attempts, redacts secrets from outputs, and requires human approval for high‑risk actions. - Security scanner (

scanner.py): quick audit that checks for network exposure (e.g., 0.0.0.0 bindings), world-readable configs, dangerous tools (exec, browser) being enabled, log redaction being disabled, and missing audit logs, and then outputs a 0–100 risk score with concrete remediation steps. - Control gateway (

gateway/): a reverse proxy that sits between Openclaw and the Anthropic API enforcing JSON schema validation, prompt-injection blocking, high‑risk tool denial, immutable audit logging, fine-grained risk scoring (0–10), and safety “circuit breakers.” - Deployment paths: quick paths for individual users, power users, and enterprise teams, with guidance for integrating with SIEM and access controls to reach a compliance-ready state.

Crucially, AI SAFE² is intentionally outside Openclaw: it wraps the system as an independent enforcement layer, so even if Openclaw is compromised or misconfigured, the external policy and gateway still mediate high‑risk behavior.

How AI SAFE² maps to recent Openclaw changes

The table below shows how the last two Openclaw releases line up against AI SAFE² controls.

| Area | Openclaw 2026.2.1 / 2026.2.2 | AI SAFE² for Openclaw |

|---|---|---|

| Gateway auth & exposure | Requires auth (no “none” mode), requires TLS 1.3, warns on unauthenticated gateway and Control UI exposure, but relies on operator to deploy and monitor correctly. | Scanner flags 0.0.0.0 bindings and missing auth as critical findings, and the AI SAFE² gateway is designed to run on localhost or behind strong auth with explicit guidance for safe deployment. |

| Channel allowlists & identity | Multiple per-channel fixes (Matrix full MXIDs, Slack/Twitch allowlists, Telegram thread handling) reduce spoofing/overreach on a per-integration basis. | Enforces sender and tool policies at the gateway level with JSON-schema validation and risk scoring, so channel-specific weaknesses are caught before hitting the model/tools. |

| Prompt injection & system prompts | Adds system-prompt safety guardrails and some tool policy conformance checks, but these rely on in-app configuration and do not score or gate every request. | Gateway performs prompt-injection checks and risk scoring for each request, while the memory protocol continuously reminds the model to treat tools, plugins, and external text as untrusted unless verified. |

| Exec/tool abuse | Hardened exec allowlists, LD*/DYLD* env blocking, lobsterPath injection fix, and safer browser/tool wrappers all reduce specific exploit classes. | The gateway can deny or require human approval for high‑risk tools (exec, browser, filesystem) altogether, independent of Openclaw’s internal configuration, and logs every attempted use immutably. |

| Memory & log safety | Introduces QMD workspace memory, fixes LFI/SSRF and media-path issues, and updates session-log paths, but does not centrally guarantee secret redaction in all outputs/logs. | Memory protocol explicitly redacts secrets from outputs and the scanner checks for log redaction being disabled, turning “no secrets out” into an enforced policy rather than a best effort. |

| Misconfig detection | Openclaw ships “doctor” style warnings and better docs for private deployments, but configuration drift is still easy. | scanner.py provides repeatable configuration drift detection with a numeric risk score and remediation guidance, so teams can run it after each upgrade or infra change. |

| Audit & compliance | Gateway and docs generate some logs and guidance, but there is no complete SOC 2 / ISO 42001 story out-of-the-box. | Control gateway produces immutable audit logs and integrates with SIEM, and the enterprise path focuses on access controls and compliance reporting specifically for Openclaw + AI SAFE². |

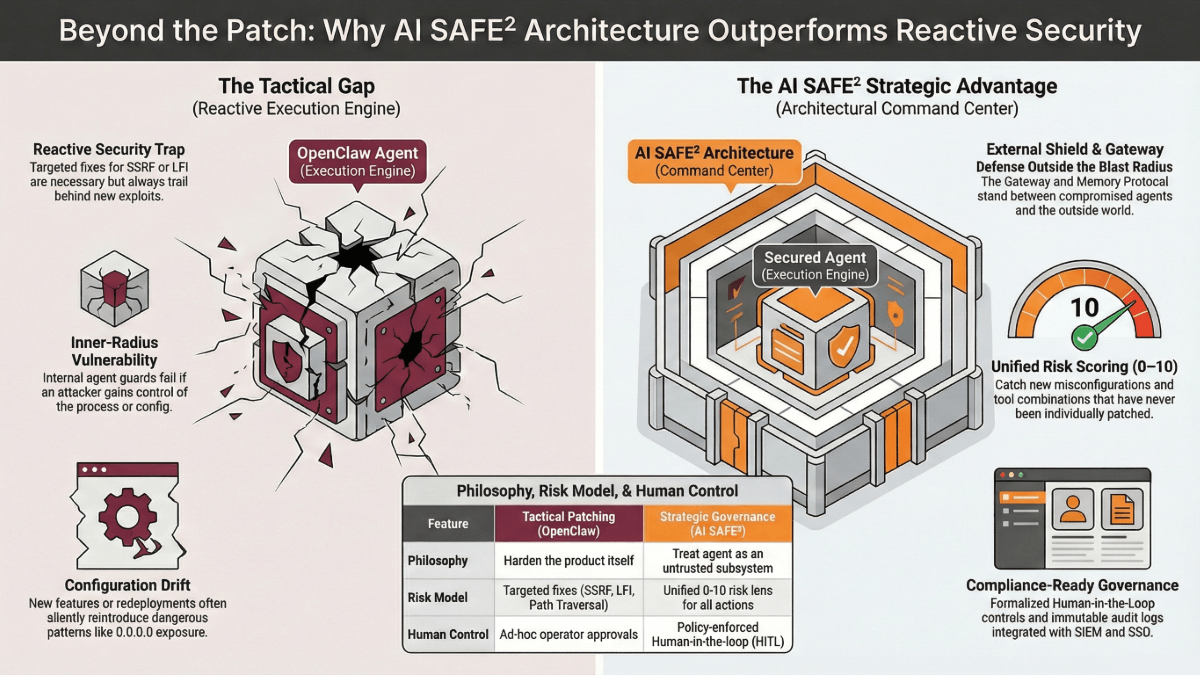

How AI SAFE² addresses current open issues better than “just patch faster”

In terms of security philosophy, Openclaw’s recent releases harden the product itself, whereas AI SAFE² treats Openclaw as an untrusted subsystem inside a broader AI-safety architecture.

AI SAFE² remains stronger than relying on core Openclaw security alone because:

- Defense outside the blast radius: If an attacker gains control of Openclaw’s process or config (e.g., via a plugin vulnerability or misconfigured gateway), the AI SAFE² gateway and memory protocol still stand between that compromised agent and the outside world, enforcing schema checks, risk scoring, and circuit breakers that Openclaw cannot override from inside.

- Unified risk model vs. patch-by-patch: Openclaw’s security changes are mostly targeted fixes (SSRF, LFI, env overrides, path traversal, allowlists, etc.), which is necessary but reactive; AI SAFE² instead applies a unified risk lens (0–10 risk scores for actions, 0–100 installation risk) that catches new misconfigurations and combinations of tools that have never been individually patched before.

- Human-in-the-loop as a first-class control: While Openclaw supports operator approvals and safer exec by design, AI SAFE² formalizes “require human approval for high‑risk actions” as a policy enforced by both memory instructions and the gateway, with auditable records of approvals and denials that support compliance reviews.

- Repeatable hardening for every upgrade: After each Openclaw release or redeploy, you can rerun

scanner.pyand compare risk scores, ensuring that new features or configuration changes did not silently reintroduce known dangerous patterns like 0.0.0.0 exposure or unredacted logs. - Compliance and operations focus: Openclaw is primarily an application/agent platform; AI SAFE² is explicitly designed to get you to “compliance-ready deployment,” integrating with SIEM, SSO/MFA, and audit programs, which is the gap that remains even with strong in-product security fixes.

Cybersecurity AI Agent, Agentic AI Concrete example

A typical real-world failure mode is: an engineer upgrades Openclaw, turns on a new channel or plugin, and accidentally exposes the gateway on 0.0.0.0 with weak auth while logs still contain API keys.

In that scenario:

- Openclaw’s own warnings and docs help but depend on the engineer noticing and acting correctly.

- AI SAFE²’s scanner flags the exposure and log issues as high severity, the memory protocol disables unsolicited outbound calls, and the gateway requires strong auth and blocks risky tool use, reducing the chance that a single misstep turns into a full compromise.

If you want, I can take this further and draft an “Openclaw + AI SAFE²” security posture deck or table you can drop into an internal review, with mapped controls to specific CWE/CAPEC items and SOC 2 / ISO 42001 clauses.

Defense Outside the Blast Radius: Why Frameworks Beat Features

FAQ on OpenClaw Security Upgrades Compared Against AI SAFE²

What is Openclaw and why are security researchers worried about it?

Openclaw is an open source ai assistant and open agent platform that runs on your own devices, connecting ai models and ai services to your local network, messaging platforms, and filesystem so it can read and write files, automate workflows, and act as a powerful ai agent for everyday tasks. Because openclaw offers direct access to endpoints, browser automation, SSH, and other high‑impact tools, security researchers warn that ai agents like openclaw dramatically expand the attack surface and can create a security nightmare if misconfigured. In practice, giving openclaw broad permissions without strong access control or a hardened security model can turn a personal ai assistant into a bot that obediently executes malicious instructions from an attacker who sends the right message at the right time.How does the Openclaw gateway work and what role does it play in openclaw security?

The gateway is the front door for openclaw: it manages authentication, connects to messaging platforms such as Whatsapp, Telegram, Discord, and Slack, and forwards each user message to the ai agent while enforcing access control before intelligence. Openclaw’s security model for the gateway is built around “identity first, scope next, model last,” meaning the gateway decides who can interact with the agent, where openclaw agents are allowed to act (tools, groups, devices, filesystem), and only then lets ai models interpret the message. Misconfigured gateway authentication or exposed openclaw instances on the public internet are a significant security risk because they let a malicious attacker send commands to the bot and potentially bypass normal endpoint security controls.What is AI SAFE² and how does it relate to Openclaw?

AI SAFE² is an open-source framework for governing, securing, and auditing agentic AI systems, designed to wrap high‑risk tools like Openclaw with an external safety and compliance layer rather than relying only on in‑app hardening. For Openclaw, AI SAFE² provides patterns and examples (like external gateways, scanners, and policies) that treat the AI agent and its automations as potentially untrusted, limiting blast radius while still letting organizations benefit from powerful ai automation.How does AI SAFE² protect Openclaw from prompt injection and malicious skills?

AI SAFE²’s “Sanitize & Isolate” pillar focuses on filtering secrets and sensitive data before they ever reach LLMs or ai models, and it treats every skill or tool as untrusted code that must be analyzed for injection, exfiltration, or malicious instructions. When applied to Openclaw, this means running skills through static and behavioral inspection, enforcing JSON/schema validation on tool calls, and using runtime anomaly detection so that a prompt injection or malicious skill is caught at the framework layer even if Openclaw itself interprets the message as legitimate.Why do articles call personal ai agents like openclaw a “security nightmare”?

Analysts describe personal ai agents like openclaw, originally known as clawdbot and moltbot, as a security nightmare because they combine always‑on automation, broad tool access, and natural‑language interfaces that make it trivial for a malicious user to trigger dangerous behavior with a single prompt injection attack. When running openclaw directly on a laptop or home server, a compromised configuration can expose sensitive data, browser profiles, SSH access, and write files on the local filesystem, so one convincing prompt injection can have a much larger blast radius than a typical chatbot. For security researchers, the combination of an open-source ai assistant, rapid open source development, and complex openclaw components makes it hard to do a full security audit and increases the chance of unnoticed security issues in real‑world openclaw deployments.How does Openclaw handle prompt injection and other malicious inputs?

Openclaw’s security documentation emphasizes that LLMs and ai models are inherently vulnerable to injection, so the platform adds system prompt guardrails, channel allowlists, and tool‑scoping to limit what a malicious prompt or prompt injection can actually do. The core idea is that most failures are not exotic exploits but “someone messaged the bot and the bot did what they asked,” so openclaw tries to constrain tools, endpoints, and permissions so that even a sophisticated prompt injection attack or sequence of malicious instructions has a bounded blast radius. However, openclaw’s security and openclaw’s system prompt guardrails still rely on correct deployment and configuration; if you over‑grant permissions or connect high‑value endpoints without guardrail policies, openclaw’s security posture can degrade quickly.How does AI SAFE² improve security posture and security audit readiness for Openclaw deployments?

AI SAFE² is built around five pillars (Sanitize & Isolate, Audit & Inventory, Fail‑Safe & Recovery, Engage & Monitor, Evolve & Educate) that collectively turn ad‑hoc checks into a repeatable security model for agent platforms. For Openclaw deployments, AI SAFE² encourages live asset mapping, secret scanning, credential rotation, immutable logging, and automated “kill switches,” which simplifies security audit preparation and gives teams continuous evidence that their ai agent deployment meets security best practices.How does AI SAFE² handle detection and response for agentic AI incidents?

Under its “Engage & Monitor” and “Fail‑Safe & Recovery” pillars, AI SAFE² emphasizes runtime behavioral analytics that watch for unusual API call spikes, unexpected cross‑agent calls, or suspicious data flows that might indicate an attacker abusing an ai agent. Instead of relying only on periodic reviews, AI SAFE² recommends automated playbooks, circuit breakers, and rollback paths so that if an Openclaw instance or other ai agent drifts into unsafe behavior, the framework can quarantine or shut down the workflow quickly while security teams investigate.What are Clawdbot and Moltbot, and how do they relate to today’s Openclaw agents?

Clawdbot and moltbot were earlier names and branding for what is now openclaw, an agent platform that evolved from a single bot into a family of openclaw agents and agentic ai capabilities known as clawdbot and moltbot in media coverage. Modern openclaw agents like the personal ai assistant described in reviews can run as a background bot that monitors directories, responds to chat messages, and triggers automation without the user typing each command, which increases both productivity and cybersecurity risk. Because openclaw runs as an open-source, open agent platform that runs on user devices and links to ai services, the same features that make clawdbot and moltbot powerful also raise security concerns for enterprises trying to control shadow ai and supply chain exposure.Which messaging platforms and endpoints can Openclaw connect to, and what security concerns does that raise?

Openclaw integrates with platforms such as whatsapp, telegram, discord, Signal, Slack, and other messaging platforms, so users can interact with the agent from almost any channel while running openclaw on their own hardware. This flexibility can turn openclaw into a central bot that routes every message, file, and API request through a single gateway, so any vulnerability or misconfiguration in access control, authentication, or endpoint permissions becomes a significant security concern. From a cybersecurity perspective, enterprises that deploy openclaw or similar ai agents like openclaw must treat these messaging integrations like new high‑privilege endpoints and include them in detection and response, firewall policy, and security best practices reviews.What kinds of tools can an Openclaw ai agent use, and why is that risky?

Openclaw connects ai agents to tools like a headless browser, command‑line automation, calendar and email, GitHub, Brave search, and local scripts, turning the bot into an agentic ai system that can read and write files, browse the web, and orchestrate complex workflows. Skills known as clawdbot and moltbot expand this further with integrations that can write files, modify configs, and call external APIs, meaning a malicious prompt or attacker could leverage openclaw directly to change your environment rather than just generate text. Security guidance stresses that you should only give openclaw the minimal permissions it needs, avoid sharing your primary API key or long‑lived credential secrets with the bot, and regularly harden tool configurations so openclaw’s security model limits what automation can do if compromised.Can AI SAFE² help with supply chain, credentials, and api key safety around Openclaw?

Yes, AI SAFE² explicitly calls out secret scanning, credential rotation, and integration with tools like GitHub Advanced Security as core practices, reducing the chance that API keys or credentials leaked by an ai agent can be reused by attackers. For Openclaw and similar open-source agents, AI SAFE² treats repositories, skills, and configs as part of the AI supply chain, encouraging teams to inventory dependencies, scan for hard‑coded secrets, and rotate keys automatically if exposure is suspected.Why is AI SAFE² a better long‑term security model for ai agents like Openclaw than relying on product patches alone?

Existing hardening efforts in tools like Openclaw focus on closing individual vulnerability classes, but AI SAFE² assumes that new behaviors and automations will continually create fresh security issues, so it embeds continuous red‑teaming, education, and monitoring as first‑class requirements. By combining preventive controls (sanitize and isolate data), strong inventory and logging, and automated response, AI SAFE² gives organizations a way to keep powerful ai agents in production while still managing security risk as these systems evolve over time.How should I deploy Openclaw to reduce security risk and protect sensitive data?

To safely deploy openclaw, documentation recommends running openclaw on a trusted endpoint inside your local network, putting the gateway behind a firewall or reverse proxy, and enforcing strong authentication and access control for every user and bot integration. A careful security audit should verify that exposed openclaw ports are closed to the public internet, that only approved users can interact with the agent, and that logs and memory do not leak sensitive data or sensitive information gathered from tools and ai services. Organizations that deploy openclaw or similar agent platform tools in production should treat it as a new high‑value system in their supply chain with its own security model, security posture, and endpoint security monitoring rather than a toy bot running on a developer laptop.What common security issues have been seen in real‑world Openclaw deployments?

Case studies mention users who deploy openclaw on a VPS with the gateway bound to 0.0.0.0, weak or missing authentication, and full filesystem and browser profiles exposed, creating a scenario where any attacker on the internet can send a message and control the bot. Other security issues involve overly broad permissions for tools that can write files, run shell commands, or access SSH, which turns an innocent‑looking command from a friend into a potential vulnerability with serious blast radius if the bot runs it without question. Security guides for openclaw highlight that many of these problems are preventable with security best practices—restricting openclaw components to the local network, limiting automation scope, and regularly reviewing configurations as part of a security audit process.How do Openclaw and ai agents like Openclaw impact enterprise cybersecurity and governance?

Because openclaw is an open-source, open-source ai assistant that employees can run themselves, it contributes to shadow ai: unofficial agentic ai tools operating outside formal corporate policies and outside traditional firewall and endpoint security controls. Enterprises face significant security and compliance questions if staff deploy openclaw or other ai agents like openclaw with access to corporate APIs, internal messaging platforms, and private repositories, especially when security model details and guardrail coverage are poorly understood. Governance programs should classify running openclaw as introducing a new automation and supply chain element, requiring explicit permission, documented security issues tracking, and ongoing detection and response integration just like any other high‑privilege application.What are the top security best practices if I still want to use Openclaw today?

If you choose to deploy openclaw, experts advise starting with a minimal configuration, disabling high‑risk tools, tightening access control at the gateway, and treating the bot as a production system that deserves the same security audit rigor as any critical service. You should only deploy openclaw on hardened hosts, restrict access to trusted messaging platforms, keep openclaw’s dependencies patched, and continuously monitor for malicious behavior or unusual automation to keep openclaw’s security in line with broader cybersecurity goals. Over time, organizations can formalize understanding the security of openclaw by documenting openclaw deployments, mapping openclaw’s security model into their broader access control framework, and ensuring that detection and response teams know how to spot a compromised bot before it causes a major incident.