SlowMist OpenClaw Security Practice Guide & AI Agent Security with AI SAFE²

Two Frameworks Walk Into a Root Shell: SlowMist vs. AI SAFE² for High-Privilege AI Agents
A technical deep-dive into the OpenClaw security […]

AI Cyber Defense vs AI Threat Landscape in 2026

Structural Adequacy of AI Cyber Defense Models Against the 2025 Threat Landscape in 2026
EXECUTIVE SUMMARY: THE VERDICT
2025 proved one fundamental […]

OpenClaw Risks: Autonomous AI Agents, Real‑World Abuse, and Hidden Security Failures

AI Agent “OpenClaw” Risks Are Not Misconfigurations — They Are an Engineering Certainty
Why autonomous AI agents like OpenClaw will keep registering […]

AI SAFE² | Secure AI Agent Framework Update v1.0 to v2.0 | Cyber Strategy Institute

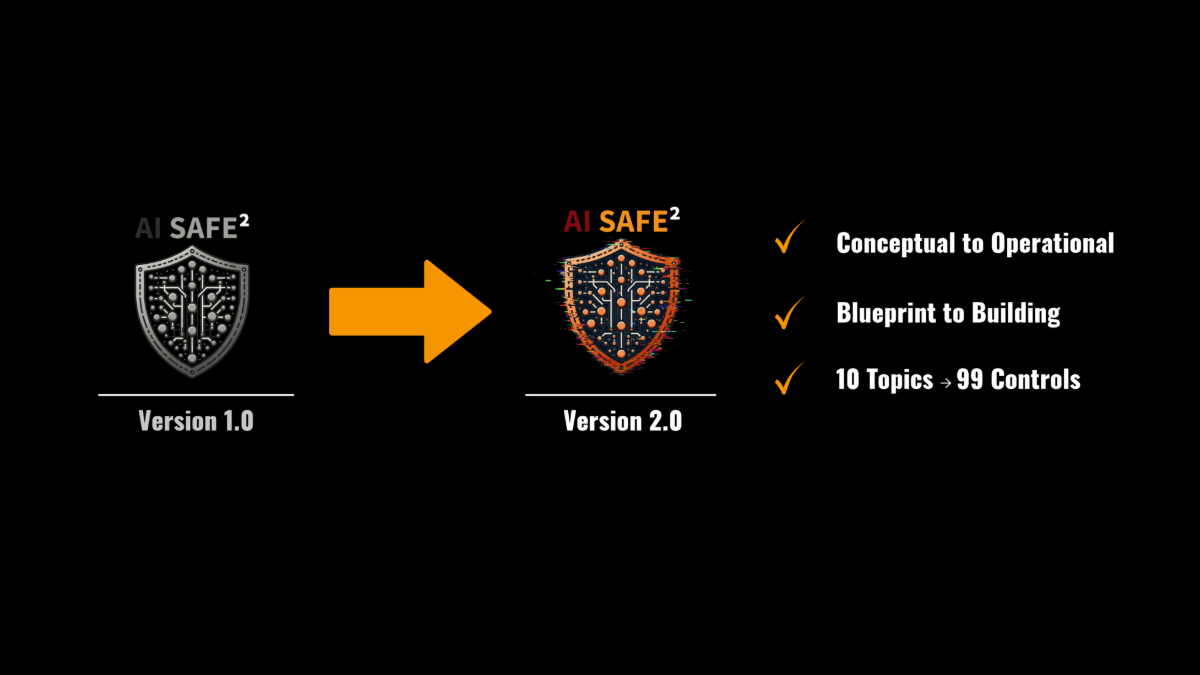

AI SAFE²: From Foundational Blueprint to Agentic Governance Reality
AI SAFE² v1.0 was born from a stark and unavoidable reality: AI began […]

Man-in-the-Prompt: The CISO’s Guide to Defeating ChatGPT Prompt Injection | Operationalizing the AI SAFE² Framework

The CISO’s Guide to Prompt Injection: Defeating Man-in-the-Prompt AI Risk with a GenAI Security Framework
A New AI Vulnerability Demands a New […]