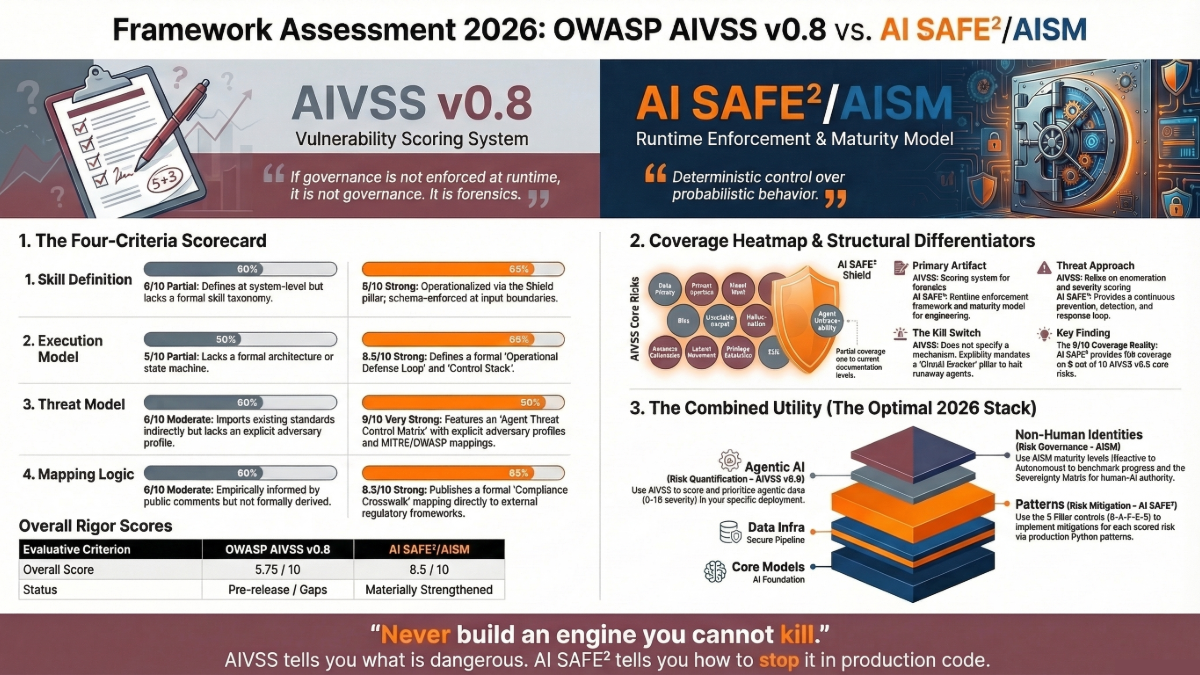

OWASP AI Vulnerability Scoring System (AIVSS) Framework v0.8 vs AI SAFE²/AISM: Framework Assessment 2026

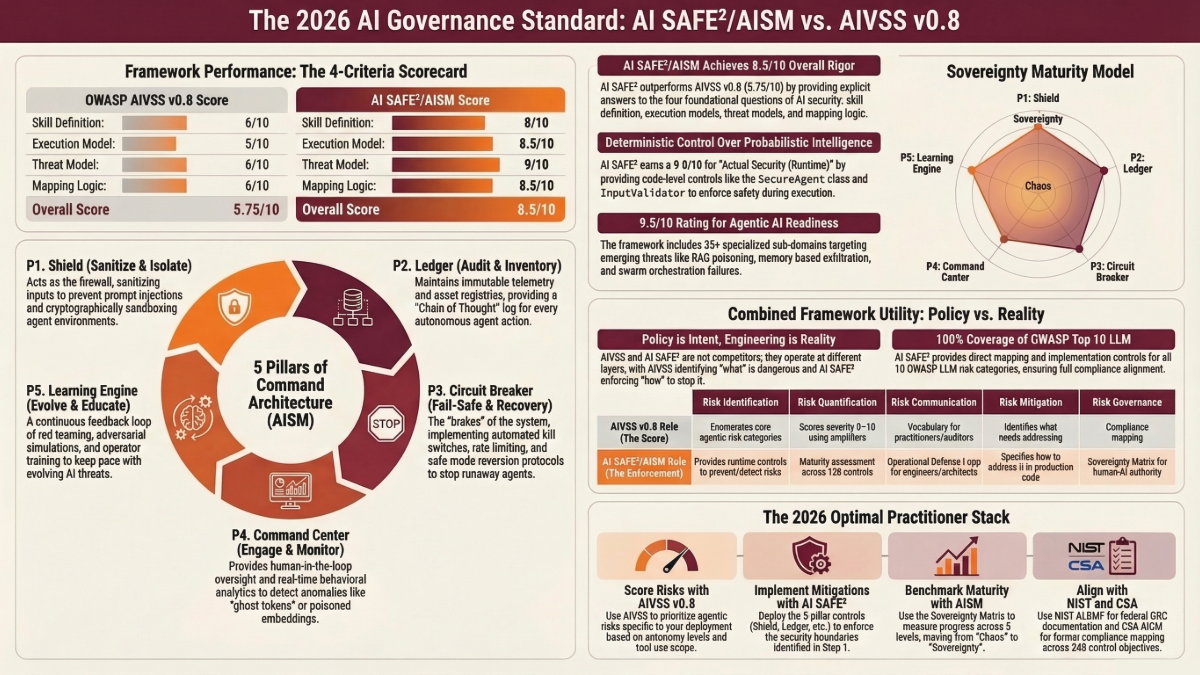

Context: On March 19, 2026, OWASP released the AI Vulnerability Scoring System (AIVSS) v0.8. Within hours, a practitioner posed four foundational questions about its rigor. This report applies the same four evaluative standards (skill definition, execution model, threat model, and mapping logic) to both AIVSS v0.8 and the CSI AI SAFE²/AISM framework. The goal is not to dismiss either effort. It is to hold both to the same standards the community deserves.

Executive Summary

OWASP AI Vulnerability Scoring System (AIVSS) Framework v0.8 is a co-publication with AIUC-1, the OWASP AI Exchange, and the OWASP Citizen Development Top 10. It incorporated over 1,900 public comments and introduced agentic “force multipliers” (autonomy, tool use, dynamic identity, and persistent memory) as scoring amplifiers on a CVSS v4.0 baseline.

A LinkedIn practitioner raised four questions that any defensible security framework must answer before it earns trust: What skill definition is used? What execution model is assumed? What threat model is applied? What mapping logic produced the final categories?

These are not advanced questions. They are first principles.

This report applies those exact criteria to both AIVSS framework v0.8 and the CSI AI SAFE²/AISM framework, scores each, and delivers a graded comparative analysis. The findings: AIVSS v0.8 is structurally important but leaves critical documentation gaps. AI SAFE²/AISM provides explicit, code-level answers to all four questions, and newly published resources, including a formal Agent Threat and Control Matrix and a Compliance Crosswalk, close gaps identified in earlier assessments.

If governance is not enforced at runtime, it is not governance. It is forensics.

The Four Evaluative Criteria

A framework that cannot explain these four elements cannot be reproducibly applied, independently audited, or safely challenged.

| Criterion | What It Requires | Why It Is Non-Negotiable |
|---|---|---|
| Skill Definition | An explicit definition of what “skill” or capability means in the agentic context | Without it, the scope of protection is undefined |
| Execution Model | A defined model of how agents execute, decide, and act | Risk categories must reflect how threats manifest in running systems |
| Threat Model | The adversary model, attack surfaces, and trust boundaries | Without a declared threat model, coverage gaps are invisible |
| Mapping Logic | The methodology that maps real-world risks to framework categories | Without it, users cannot challenge, extend, or update the framework |

You cannot audit a millisecond with a weekly meeting. The standard for any framework governing autonomous agents must be that it answers these questions on demand, not because it was designed to game a checklist, but because it was designed from first principles.

OWASP AIVSS v0.8 – What It Says, What It Doesn’t

What AIVSS v0.8 Gets Right

AIVSS v0.8 is an ambitious undertaking with real structural value. Released March 19, 2026 ahead of RSAC 2026, it extends CVSS v4.0 with agentic risk amplification factors: autonomy level, tool use scope, multi-agent interactions, non-determinism, and capacity for self-modification. These amplifiers produce a contextual score from 0 to 10 that reflects the degree to which an agent’s capabilities amplify a base vulnerability.
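To make the idea of amplification concrete, here is a minimal sketch of how agentic factors could lift a base score toward 10. The 0.0 to 1.0 rating scale, the `AgenticAmplifiers` class, and the "fill the remaining headroom" rule are all assumptions made for illustration. This is not the published AIVSS equation, which should be taken from the v0.8 documentation itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgenticAmplifiers:
    """Hypothetical 0.0-1.0 ratings for the five factors named in the text."""
    autonomy: float
    tool_use_scope: float
    multi_agent_interactions: float
    non_determinism: float
    self_modification: float

    def mean(self) -> float:
        values = (
            self.autonomy,
            self.tool_use_scope,
            self.multi_agent_interactions,
            self.non_determinism,
            self.self_modification,
        )
        if any(not 0.0 <= v <= 1.0 for v in values):
            raise ValueError("each amplifier rating must be between 0.0 and 1.0")
        return sum(values) / len(values)


def contextual_score(base_score: float, amplifiers: AgenticAmplifiers) -> float:
    """Raise a 0-10 base score toward 10 in proportion to the mean rating.

    ASSUMPTION: this headroom rule is illustrative only and is not the
    AIVSS v0.8 formula.
    """
    if not 0.0 <= base_score <= 10.0:
        raise ValueError("base score must be between 0 and 10")
    headroom = 10.0 - base_score
    return round(base_score + headroom * amplifiers.mean(), 1)
```

Under this toy rule, a vulnerability with a base score of 6.0 stays at 6.0 in a system with no agentic capability and approaches 10.0 as every factor is rated at its maximum, which mirrors the qualitative behavior described above without claiming to reproduce the official numbers.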

The release includes a refined quantitative model, real-world attack scenarios, and crosswalks to the OWASP Agentic AI Top 10 for 2026, CSA MAESTRO, AIUC-1, and NIST AI RMF, plus an empirical Appendix D documenting expert contributor survey findings. Its Distinguished Review Board includes former NSA Cybersecurity Director Rob Joyce, Anthropic Deputy CISO Jason Clinton, NIST’s Apostol Vassilev, and Harvard CISO Michael Tran Duff. Institutional credibility matters.

The AIVSS v0.8 Top 10 Core Risks

- Agentic AI Tool Misuse — Insecure invocation, compromised tool usage, output misinterpretation

- Agent Access Control Violation — Permission escalation, role inheritance exploitation, temporal permission drift

- Agent Data Exfiltration — Memory-based data leakage, cross-system data access via trusted channels

- Agent Memory & Context Manipulation — Poisoning of persistent memory and RAG stores

- Multi-Agent Orchestration Attacks — Inter-agent communication exploitation, control-flow hijacking

- Agent Goal Manipulation — Objective redirection via prompt injection or poisoned external content

- Context Amnesia Exploitation — Manipulation of an agent’s context maintenance across sessions

- Agent Supply Chain & Dependency Attacks — Compromised tools, plugins, MCP servers, or model providers

- Agent Untraceability — Lack of forensic attribution across agent actions

- Agent Goal and Instruction Manipulation — Compound redirection combining goal hijack with instruction-layer attacks

Grading AIVSS v0.8 Against the Four Criteria

Skill Definition: Partial (6/10) — The document defines Agentic AI at the system level but not as discrete skills. It implies skill = tool invocation capability without formally defining the skill abstraction layer. No formal skill taxonomy exists. No distinction between atomic skills, composite skills, and delegated skills.

Execution Model: Partial (5/10) — AIVSS references a perception → decision-making → action execution → memory cycle. However, there is no formal execution model diagram, state machine, or declared model of agent planning loops that a threat analyst could use to systematically enumerate attack surfaces. Two practitioners analyzing the same agentic system may produce structurally different assessments. This undermines reproducibility.

Threat Model: Moderate (6/10) — The framework maps indirectly against CSA MAESTRO and the OWASP Agentic Threats document. But it does not explicitly declare: Who is the adversary? What are the trust boundaries? What is outside scope?

Mapping Logic: Moderate (6/10) — Category selection emerges from expert collaboration, 1,900+ public comments, and empirical surveys. The logic is community-driven and empirically informed but not formally derived from an underlying model. A practitioner who disagrees with a category boundary has no formal process to challenge it.
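The execution-model gap is easier to see with an example of what a declared model buys the analyst. The sketch below takes the four phases AIVSS references and writes them down as an explicit state machine. The strict one-way cycle in `TRANSITIONS` is an assumption for illustration, not something the AIVSS document specifies.

```python
from enum import Enum


class Phase(Enum):
    PERCEPTION = "perception"
    DECISION = "decision-making"
    ACTION = "action execution"
    MEMORY = "memory"


# ASSUMPTION: a strict cycle in which memory feeds the next perception step.
TRANSITIONS = {
    Phase.PERCEPTION: Phase.DECISION,
    Phase.DECISION: Phase.ACTION,
    Phase.ACTION: Phase.MEMORY,
    Phase.MEMORY: Phase.PERCEPTION,
}


def attack_surfaces() -> list[str]:
    """List every phase and every hand-off as a candidate attack surface."""
    surfaces = [f"phase: {phase.value}" for phase in Phase]
    surfaces += [
        f"hand-off: {src.value} -> {dst.value}"
        for src, dst in TRANSITIONS.items()
    ]
    return surfaces
```

Once the model is written down, two analysts walking the same system start from the same eight entries (four phases, four hand-offs) rather than from their own mental picture, which is the reproducibility property the grade above is asking for.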

AIVSS v0.8 Summary Grade

| Criterion | Score | Status |
|---|---|---|
| Skill Definition | 6/10 | Partial: system-level definition, no formal skill taxonomy |
| Execution Model | 5/10 | Partial: capability attributes described, no formal architecture |
| Threat Model | 6/10 | Moderate: imports MAESTRO/NIST indirectly, no explicit adversary profile |
| Mapping Logic | 6/10 | Moderate: empirically informed, not formally derived |
| Overall Rigor | 5.75/10 | Pre-v1.0; structural gaps are addressable but currently unresolved |

Contextual Note: AIVSS v0.8 is explicitly pre-release, with v1.0 review opening April 16, 2026. These gaps are not indictments of bad faith; they are the documented work remaining.

AI SAFE² and AISM — Graded Against the Same Four Criteria

Framework Overview

AI SAFE² (Secure AI Framework for Enterprise Ecosystems) is CSI’s practitioner-oriented framework for agentic AI security, operating across five pillars as a continuous defense loop:

- Sanitize & Isolate — Input validation, PII filtering, boundary enforcement at every agent boundary

- Audit & Inventory — Live mapping of machine identities, vector stores, agent chains, and credentials

- Fail-Safe & Recovery — Circuit breakers, automated credential rotation, kill-switch bots, just-in-time access

- Engage & Monitor — Runtime behavioral analytics, anomaly detection, vector DB drift monitoring

- Evolve & Educate — Red-team adversarial exercises (A2A, RAG leakage), continuous developer training

AISM (AI Sovereignty Maturity Model) sits above AI SAFE², defining five levels (Chaos → Visibility → Governance → Control → Sovereignty), five pillars (Shield, Ledger, Circuit Breaker, Command Center, Learning Engine), and 128 controls in a Sovereignty Matrix.

Gap Closures Since Initial Assessment

Evaluation of additional published resources reveals that several gaps identified in the initial assessment have been materially addressed:

1. Agent Threat and Control Matrix (Gap Closure: Standalone Threat Model) — The AISM Agent Threat and Control Matrix provides exactly what was previously missing: a formal threat model document with 10 explicit threat categories, specific attack vectors per category, MITRE ATLAS technique cross-references, OWASP LLM vulnerability mappings, and control-to-threat traceability across all five AISM pillars. This is not a theoretical document. It maps adversary techniques to engineering controls with the precision a red team can operationalize.

2. Compliance Crosswalk (Gap Closure: Formal Compliance Mapping) — The AISM Compliance Crosswalk maps AISM controls to NIST AI RMF, ISO 42001, OWASP LLM, and MITRE ATLAS. This directly addresses the enterprise procurement gap that required formal crosswalk tables.

3. Self-Assessment Tool — An AISM Self-Assessment Tool now exists for verifying control implementation against threat categories, closing the gap identified when comparing against Microsoft RAI MM’s assessment rubrics.

4. Operational Loop and Control Stack — Formalized documents (operational-loop.md, control-stack.md) further externalize the execution model that was previously embedded in the architecture.

AI SAFE²/AISM Against the Four Criteria

Skill Definition: Strong (8/10) — AISM defines “skill” operationally through the Shield pillar, mandating that every agent boundary receive input validation and that agent capabilities be enumerated in a live inventory. The Developer Implementation Guide includes an InputValidator class enforcing schema-level skill boundary constraints. Remaining gap: A standalone definitions document rather than one embedded in the control architecture.
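As a rough picture of what schema-level skill boundary enforcement can look like, here is a minimal sketch. It borrows the `InputValidator` name from the text, but the `SkillSchema` type, the `validate` method, and its return shape are hypothetical. This is not the code from the Developer Implementation Guide.

```python
from dataclasses import dataclass


@dataclass
class SkillSchema:
    """Hypothetical declaration of one agent skill and the arguments it accepts."""
    name: str
    required: dict[str, type]  # argument name -> expected type
    max_string_length: int = 256


class InputValidator:
    """Illustrative only: refuses unknown skills and off-schema arguments."""

    def __init__(self, schemas: list[SkillSchema]) -> None:
        # The registry doubles as a simple inventory of declared skills.
        self._schemas = {schema.name: schema for schema in schemas}

    def validate(self, skill: str, args: dict) -> list[str]:
        """Return a list of violations; an empty list means the call may proceed."""
        schema = self._schemas.get(skill)
        if schema is None:
            return [f"unknown skill: {skill}"]
        errors = []
        for key in sorted(args.keys() - schema.required.keys()):
            errors.append(f"unexpected argument: {key}")
        for key, expected in schema.required.items():
            if key not in args:
                errors.append(f"missing argument: {key}")
            elif not isinstance(args[key], expected):
                errors.append(f"wrong type for {key}")
            elif isinstance(args[key], str) and len(args[key]) > schema.max_string_length:
                errors.append(f"argument too long: {key}")
        return errors
```

The point of the sketch is the default-deny posture: a skill that is not declared in the registry cannot be invoked at all, so the set of schemas effectively becomes the operational definition of what the agent can do.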

Execution Model: Strong (8.5/10, upgraded from 8/10) — The core design principle “Probabilistic intelligence requires deterministic control” is itself an execution model thesis. The Operational Defense Loop and the now-published operational-loop.md and control-stack.md documents describe formal execution architecture for governance during agent runtime, with the Control Stack mapping from Policy Layer through the five AISM pillars to Agent Platform and Infrastructure layers. Remaining gap: Internal agent cognition architecture (planner-executor-verifier chains) still requires supplementation.

Threat Model: Strong (9/10, upgraded from 8/10) — The Agent Threat and Control Matrix is the critical upgrade. It provides 10 explicit threat categories (Prompt Injection, Multi-Agent Exploitation, Memory Poisoning, Supply Chain Compromise, Non-Human Identity Abuse, Runaway Autonomy, Data Exfiltration, Model Extraction, Adversarial Inputs, Insider Threats) with specific attack vectors, MITRE ATLAS technique cross-references, and OWASP LLM mappings per category. This is a standalone threat model document that supplies precisely what was missing. Remaining gap: Formal STRIDE/PASTA mapping and trust boundary diagrams would achieve full marks.

Mapping Logic: Strong (8.5/10, upgraded from 8/10) — The Compliance Crosswalk maps AISM pillars and controls to external regulatory framework requirements. Combined with the Sovereignty Matrix’s 128 controls organized by pillar and maturity level, practitioners now have formal mapping structures. Remaining gap: EU AI Act article-level crosswalk still in development.

AI SAFE²/AISM Updated Summary Grade

| Criterion | Original Score | Updated Score | Status |
|---|---|---|---|
| Skill Definition | 8/10 | 8/10 | Strong: operational, architecture-embedded; standalone taxonomy doc still needed |
| Execution Model | 8/10 | 8.5/10 | Strong: Operational Defense Loop + published control-stack and operational-loop docs |
| Threat Model | 8/10 | 9/10 | Very Strong: Agent Threat Control Matrix provides explicit adversary profiles + MITRE/OWASP mappings |
| Mapping Logic | 8/10 | 8.5/10 | Strong: Compliance Crosswalk now published; EU AI Act mapping in progress |
| Overall Rigor | 8.0/10 | 8.5/10 | Materially strengthened by published threat model and compliance crosswalk |

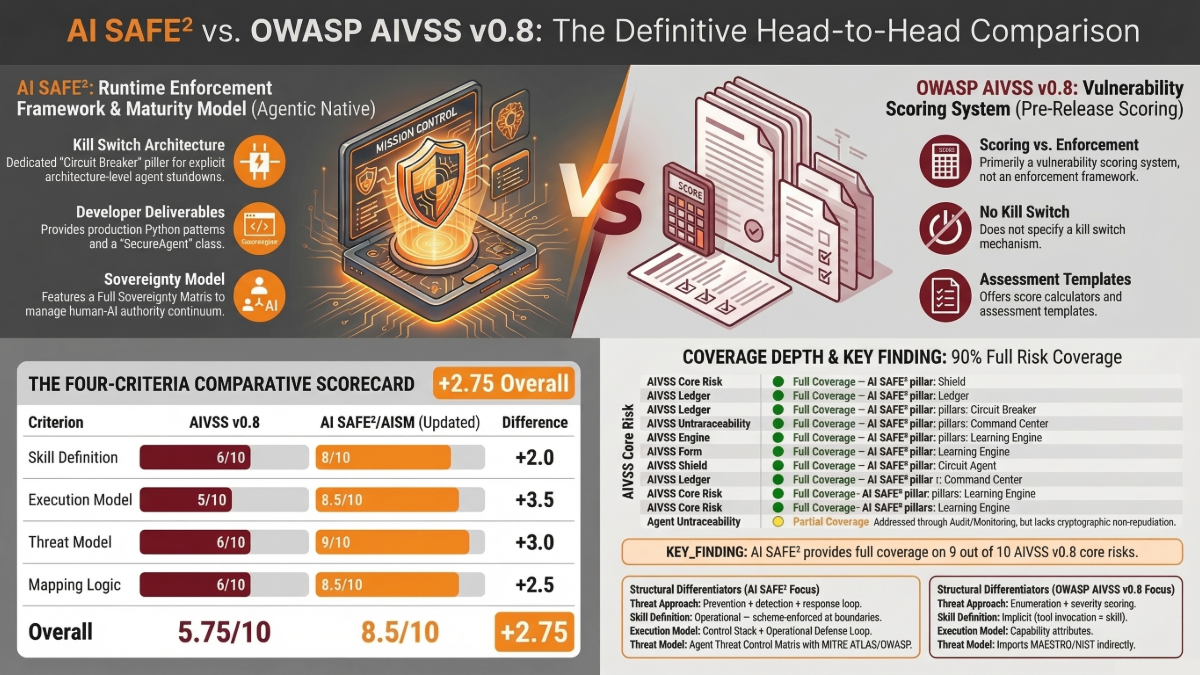

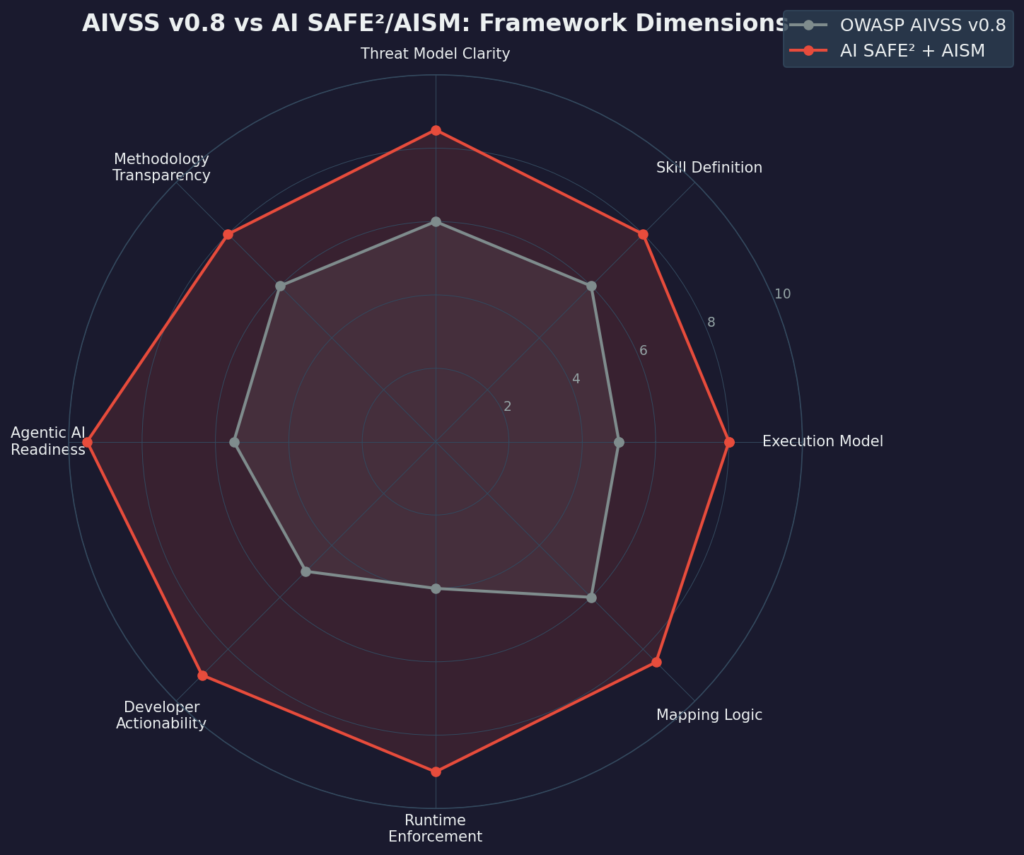

Head-to-Head Comparison

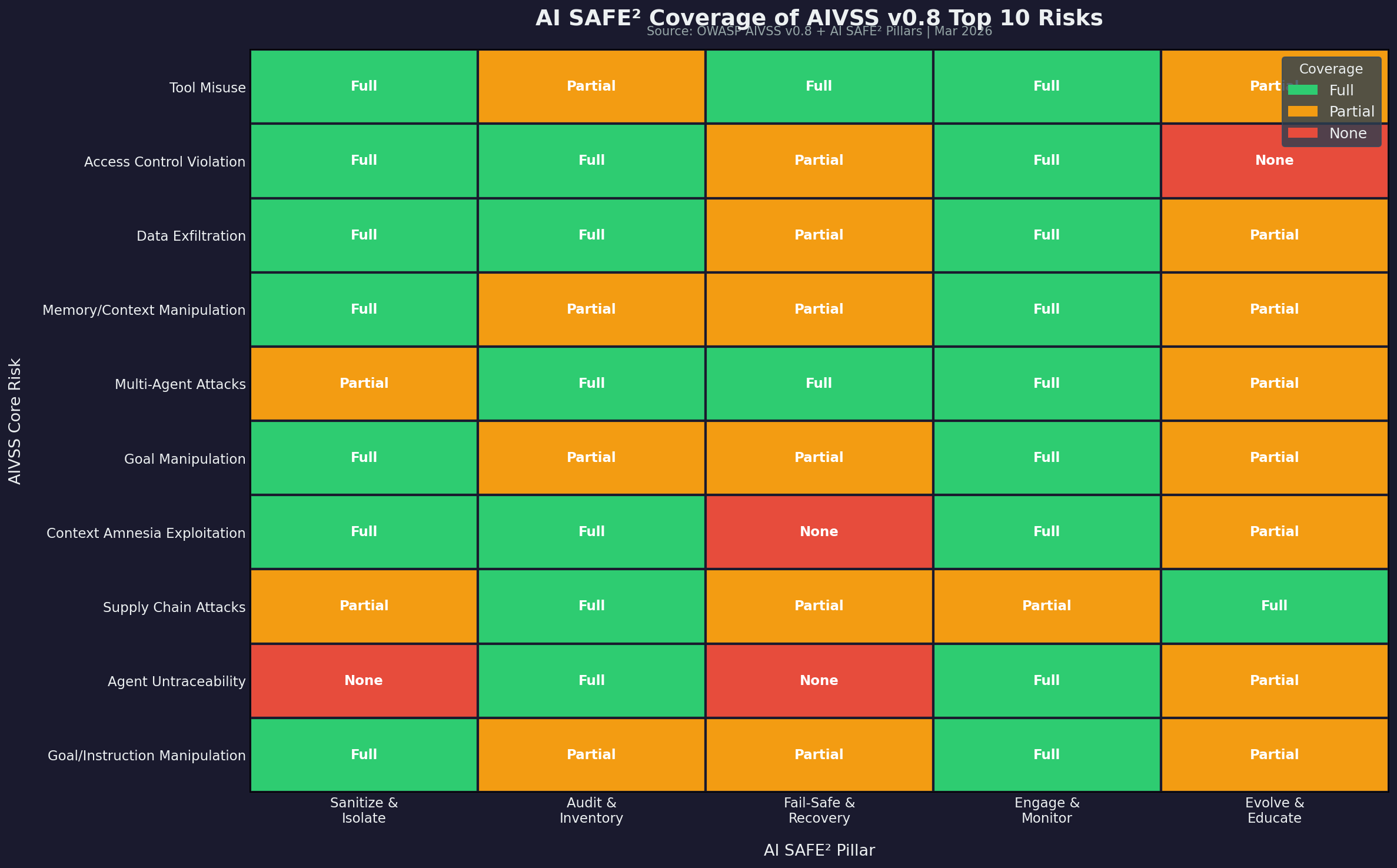

AI SAFE² Coverage of AIVSS v0.8 Top 10 Risks

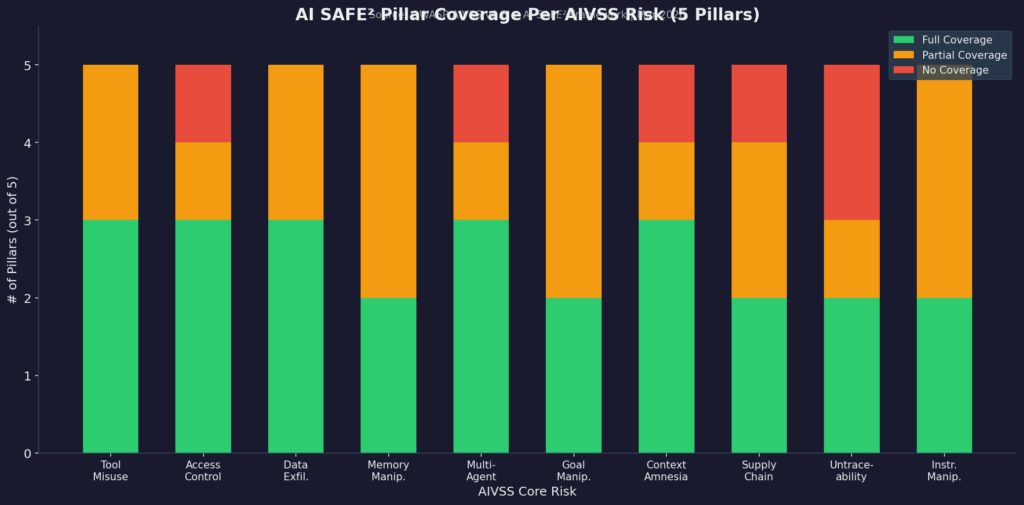

The heatmap maps each AIVSS v0.8 core risk to the AI SAFE² pillars, rating coverage as Full, Partial, or None.

Key Finding: AI SAFE² provides full coverage on 9 of 10 AIVSS v0.8 core risks and partial coverage on 1 (Agent Untraceability, addressed through Audit & Inventory and Engage & Monitor but lacking cryptographic non-repudiation at the current documentation level).
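For readers who want the key finding in a machine-readable form, the ratings can be captured as a small lookup table. The sketch below encodes only what the text states (every risk rated Full except Agent Untraceability). The per-pillar detail lives in the heatmap and is not reproduced here, and the `COVERAGE` and `needs_follow_up` names are illustrative.

```python
from collections import Counter

# Ratings as stated in the key finding; risk names follow the AIVSS v0.8 list.
COVERAGE = {
    "Agentic AI Tool Misuse": "Full",
    "Agent Access Control Violation": "Full",
    "Agent Data Exfiltration": "Full",
    "Agent Memory & Context Manipulation": "Full",
    "Multi-Agent Orchestration Attacks": "Full",
    "Agent Goal Manipulation": "Full",
    "Context Amnesia Exploitation": "Full",
    "Agent Supply Chain & Dependency Attacks": "Full",
    "Agent Untraceability": "Partial",
    "Agent Goal and Instruction Manipulation": "Full",
}


def summarize(coverage: dict[str, str]) -> Counter:
    """Count how many risks fall under each coverage rating."""
    return Counter(coverage.values())


def needs_follow_up(coverage: dict[str, str]) -> list[str]:
    """Return the risks that are not rated Full."""
    return [risk for risk, rating in coverage.items() if rating != "Full"]
```

Keeping the ratings in data rather than in an image makes it straightforward to re-run the tally when either framework publishes a new version.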

Pillar Coverage Depth Per Risk

The Four-Criteria Comparative Scorecard (Updated)

| Criterion | AIVSS v0.8 | AI SAFE²/AISM (Updated) | Difference |
|---|---|---|---|
| Skill Definition | 6/10 | 8/10 | AISM +2 |
| Execution Model | 5/10 | 8.5/10 | AISM +3.5 |
| Threat Model | 6/10 | 9/10 | AISM +3 |
| Mapping Logic | 6/10 | 8.5/10 | AISM +2.5 |
| Overall | 5.75/10 | 8.5/10 | AISM +2.75 |
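The overall figures in the scorecard are consistent with an unweighted mean of the four criterion scores. The report does not state its aggregation rule, so the equal weighting in this short check is an assumption, and the function names are illustrative.

```python
from statistics import mean

AIVSS = {
    "Skill Definition": 6.0,
    "Execution Model": 5.0,
    "Threat Model": 6.0,
    "Mapping Logic": 6.0,
}
AISM = {
    "Skill Definition": 8.0,
    "Execution Model": 8.5,
    "Threat Model": 9.0,
    "Mapping Logic": 8.5,
}


def overall(scores: dict[str, float]) -> float:
    """Unweighted mean of the criterion scores (ASSUMPTION: equal weights)."""
    return mean(list(scores.values()))


def differences(baseline: dict[str, float], other: dict[str, float]) -> dict[str, float]:
    """Per-criterion lead of `other` over `baseline`."""
    return {criterion: other[criterion] - baseline[criterion] for criterion in baseline}
```

With these inputs, `overall(AIVSS)` is 5.75, `overall(AISM)` is 8.5, and the per-criterion differences are 2.0, 3.5, 3.0, and 2.5, matching the table.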

Structural Differentiators

| Dimension | OWASP AIVSS v0.8 | AI SAFE²/AISM |
|---|---|---|
| Primary Artifact Type | Vulnerability scoring system | Runtime enforcement framework + maturity model |
| Threat Approach | Enumeration + severity scoring | Prevention + detection + response loop |
| Developer Deliverable | Score calculator, assessment templates | Production Python patterns, SecureAgent class |
| Skill Definition | Implicit (tool invocation = skill) | Operational: schema-enforced at boundaries |
| Execution Model | Capability attributes | Control Stack + Operational Defense Loop |
| Threat Model | Imports MAESTRO/NIST indirectly | Agent Threat Control Matrix with MITRE ATLAS/OWASP mappings |
| Kill Switch Mechanism | Not specified | Circuit Breaker pillar: explicit architecture |
| Sovereignty Model | Not present | Full Sovereignty Matrix: human-AI authority continuum |

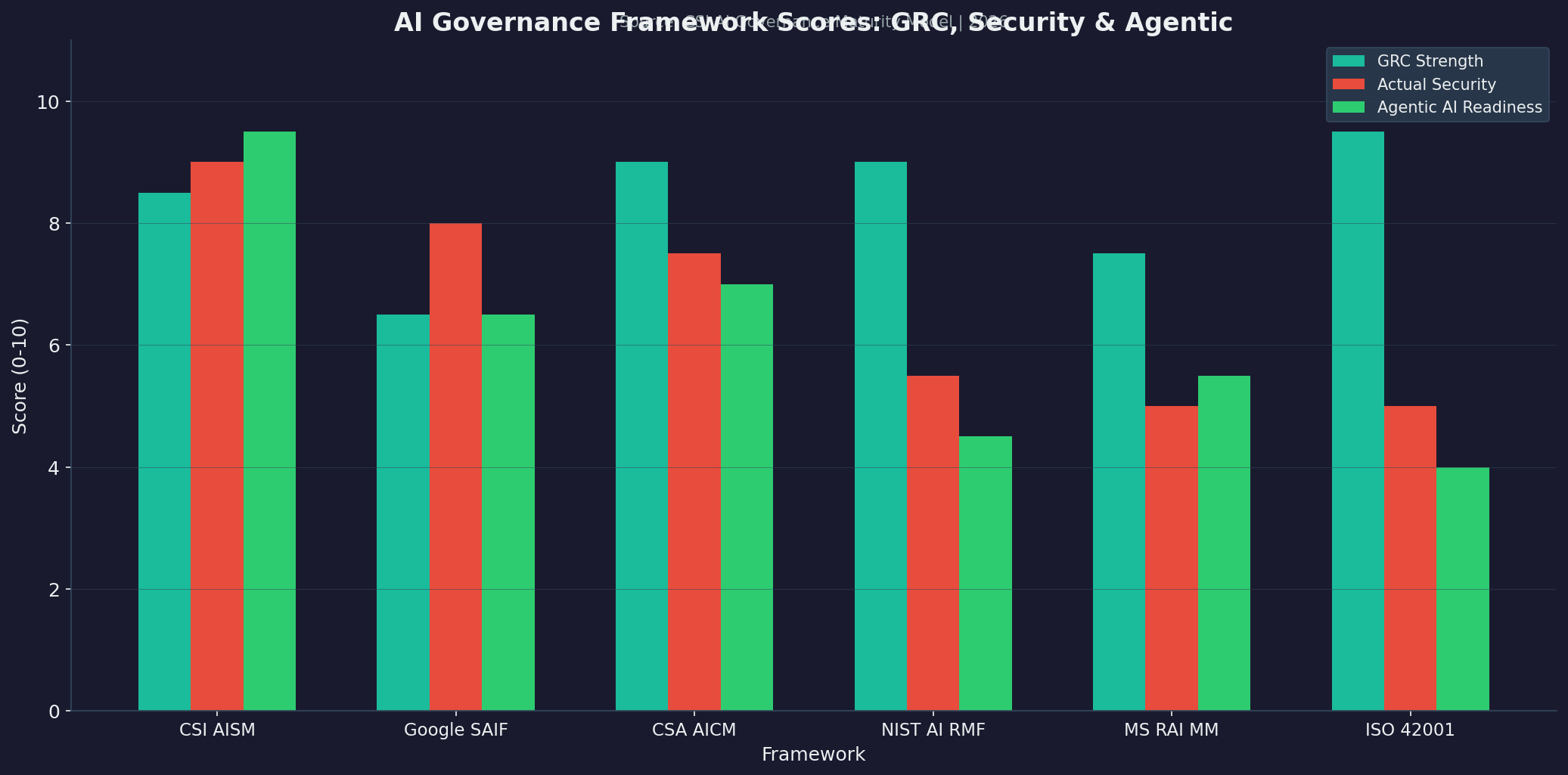

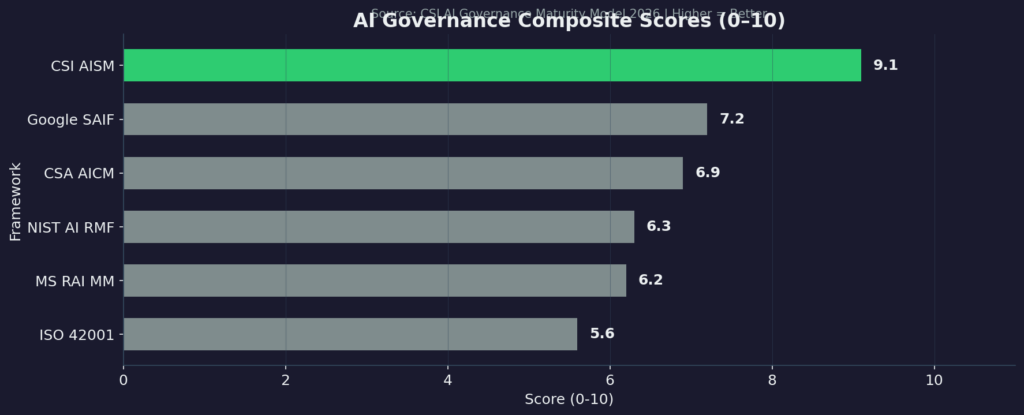

Bar chart comparing GRC Strength, Actual Security, and Agentic AI Readiness scores across six major AI governance frameworks

Horizontal bar chart of AI Governance Composite Scores showing CSI AISM at 9.1 leading all frameworks.

| Dimension | AI SAFE²/AISM Score | Evidence |
|---|---|---|
| GRC Strength | 8.5/10 | Sovereignty Matrix + Ledger pillar; compliance crosswalk now published |
| Liberty & Freedom | 9.5/10 | MIT + CC-BY-SA; sovereignty-as-architecture; no standards body dependency |
| Actual Security (Runtime) | 9.0/10 | Shield, Circuit Breaker, Command Center: deterministic controls |
| Engineer Actionability | 9.0/10 | Production Python patterns: InputValidator, CircuitBreaker, SecureAgent class |
| Safety Controls | 9.0/10 | Kill switches, rate limiting, recursion limits, HITL approval chains |
| Agentic AI Readiness | 9.5/10 | Memory governance, recursion limits, semantic isolation, multi-agent sovereignty |
| Composite Score | 9.1/10 | Highest among six major frameworks evaluated |
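As a quick consistency check, and assuming the composite is an unweighted mean of the six dimension scores (the report does not state its weighting), the figures in the table work out as:

$$
\frac{8.5 + 9.5 + 9.0 + 9.0 + 9.0 + 9.5}{6} = \frac{54.5}{6} \approx 9.08 \approx 9.1
$$

Under that assumption, the published 9.1/10 matches the dimension scores after rounding to one decimal place.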

Combined Framework Utility — AIVSS + AI SAFE²/AISM

These frameworks are not competitors. They operate at different layers of the AI security stack and complement each other in production.

Policy is just intent. Engineering is reality.

| Layer | AIVSS v0.8 Role | AI SAFE²/AISM Role |
|---|---|---|
| Risk Identification | Enumerates the 10 core agentic risk categories | Provides runtime controls that prevent or detect those risks |
| Risk Quantification | Scores severity 0–10 using agentic amplification factors | Maturity level assessment across 128 controls |
| Risk Communication | Common vocabulary for practitioners and auditors | Operational Defense Loop for engineers and architects |
| Risk Mitigation | Identifies what needs to be addressed | Specifies how to address it in production code |
| Risk Governance | Framework-level compliance mapping | Sovereignty Matrix for human-AI authority governance |

The optimal practitioner stack for 2026 agentic AI deployment:

- Use AIVSS v0.8 to score and prioritize agentic risks in your specific deployment

- Use AI SAFE² pillar controls to implement mitigations for each scored risk

- Use AISM maturity levels to measure and benchmark governance progress

- Use NIST AI RMF for federal/regulatory alignment and GRC documentation

- Use CSA AICM for formal compliance mapping across 243 control objectives

The Agentic AI Risk Practitioner Challenge — Was It Fair?

The four questions were fair, foundational, and applicable to every security framework including AI SAFE²/AISM.

AIVSS v0.8 partially answers all four in its documentation but does not surface those answers accessibly. The broader point stands: if the most basic practitioner questions about a framework’s foundations cannot be answered directly and accessibly, that is a communication and documentation failure regardless of the underlying rigor.

AIVSS v0.8 is pre-release by design. The current gaps are the work that community review is designed to address. The April 16, 2026 review period is where the community should engage.

AI SAFE²/AISM earns stronger scores not because its documentation is complete (it also has work to do), but because its architectural decisions are the answers. The Control Stack is the execution model. The Operational Defense Loop is the threat response architecture. The Shield pillar enforces the skill boundary. The Sovereignty Matrix is the mapping logic.

Never build an engine you cannot kill. The frameworks the community needs are ones that pass the four-question test on demand. The standard should be nothing less.

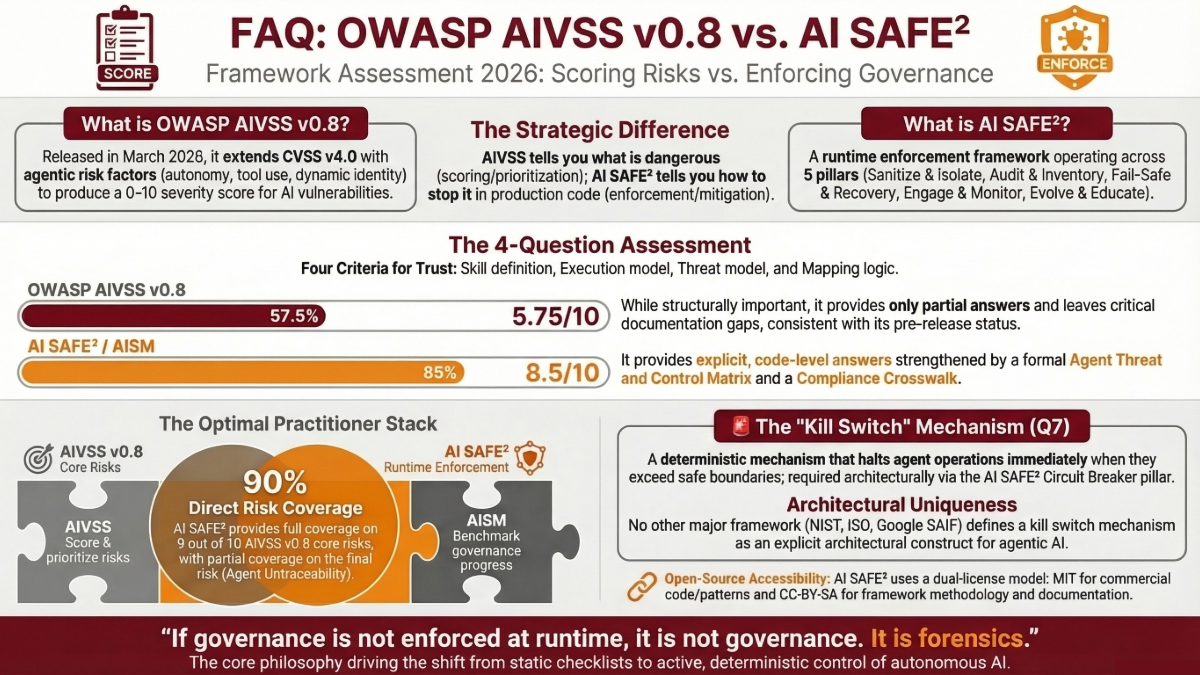

Frequently Asked Questions (FAQ): AI Security, OWASP AI Vulnerability Scoring System (AIVSS) v0.8, AI SAFE², AISM, & AI Risk

1. What is OWASP AI Vulnerability Scoring System (AIVSS) v0.8?

AIVSS v0.8 is the AI Vulnerability Scoring System released by OWASP on March 19, 2026. It extends CVSS v4.0 with agentic risk amplification factors (autonomy, tool use, dynamic identity, persistent memory, and self-modification), producing a contextual severity score from 0 to 10 for agentic AI vulnerabilities. It also identifies 10 core agentic AI security risks.

2. What is AI SAFE² and how does it differ from AIVSS?

AI SAFE² is CSI’s runtime enforcement framework for agentic AI security, organized across five pillars: Sanitize & Isolate, Audit & Inventory, Fail-Safe & Recovery, Engage & Monitor, and Evolve & Educate. Where AIVSS scores and prioritizes risks, AI SAFE² specifies how to prevent, detect, and respond to those risks in production code. AIVSS tells you what is dangerous. AI SAFE² tells you how to stop it.

3. What is the AI Sovereignty Maturity Model (AISM)?

AISM is the maturity layer that sits above AI SAFE², defining five governance levels (Chaos → Visibility → Governance → Control → Sovereignty), five pillars (Shield, Ledger, Circuit Breaker, Command Center, Learning Engine), and 128 controls in a Sovereignty Matrix. It is the only framework that formally models the continuum from human-controlled to fully autonomous AI operations as a measurable governance construct.

4. What were the four practitioner questions that prompted this analysis of security risks?

A LinkedIn practitioner asked: (1) What skill definition does the framework use? (2) What execution model is assumed? (3) What threat model is applied? (4) What mapping logic produced the final categories? These are foundational questions that any defensible security framework must answer before practitioners can trust or reproduce its outputs.

5. How did OWASP AI Vulnerability Scoring System (AIVSS) Framework v0.8 score on the four criteria?

AIVSS v0.8 scored 5.75/10 overall: Skill Definition 6/10, Execution Model 5/10, Threat Model 6/10, Mapping Logic 6/10. The framework provides partial answers to all four questions but leaves critical documentation gaps. This is consistent with its pre-release status, with v1.0 review opening April 16, 2026.

6. How did AI SAFE²/AISM score on the same criteria?

After incorporating newly published resources (Agent Threat Control Matrix, Compliance Crosswalk, Self-Assessment Tool), AI SAFE²/AISM scored 8.5/10 overall: Skill Definition 8/10, Execution Model 8.5/10, Threat Model 9/10, Mapping Logic 8.5/10. The primary gaps remaining are documentation-level (standalone skill taxonomy, EU AI Act crosswalk) rather than architectural.

7. What new resources changed the AISM scores?

Three published documents materially strengthened the AISM assessment: (1) The Agent Threat and Control Matrix, providing 10 threat categories with explicit attack vectors and MITRE ATLAS/OWASP mappings; this raised the Threat Model score from 8 to 9. (2) The Compliance Crosswalk, mapping AISM controls to NIST, ISO 42001, OWASP, and MITRE. (3) The Self-Assessment Tool for verifying control implementation.

8. Does AI SAFE² cover all 10 AIVSS core risks?

AI SAFE² provides full coverage on 9 of 10 AIVSS v0.8 core risks across its five pillars. The one partial coverage is Agent Untraceability, which is addressed through the Audit & Inventory and Engage & Monitor pillars but lacks cryptographic non-repudiation at the current documentation level.

9. What is a kill switch in the context of agentic AI, and why does it matter?

A kill switch is a deterministic mechanism that can halt agent operations immediately when they exceed safe boundaries. AISM’s Circuit Breaker pillar provides this as an architectural requirement, including rate limiting, recursion limits, and safe-mode activation. No other major framework (NIST AI RMF, ISO 42001, CSA AICM, Microsoft RAI MM, Google SAIF) defines a kill switch mechanism for agentic AI as an architectural construct. Never build an engine you cannot kill.
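A minimal sketch of the idea follows, combining a rate limit, a recursion-depth limit, and a latched safe mode. It reuses the `CircuitBreaker` name mentioned in this report, but the constructor parameters, the `allow` and `reset` methods, and the sliding-window approach are assumptions for illustration rather than the AI SAFE² reference implementation.

```python
import time


class CircuitBreaker:
    """Illustrative kill switch: latches into safe mode when a hard limit is hit."""

    def __init__(self, max_calls_per_window: int, window_seconds: float,
                 max_depth: int, clock=time.monotonic) -> None:
        self.max_calls = max_calls_per_window
        self.window = window_seconds
        self.max_depth = max_depth
        self._clock = clock  # injectable so the breaker can be tested without sleeping
        self._calls: list[float] = []
        self.safe_mode = False

    def allow(self, depth: int) -> bool:
        """Return True if the action may run; once tripped, every call is refused."""
        if self.safe_mode:
            return False
        now = self._clock()
        # Keep only the calls that still fall inside the sliding window.
        self._calls = [t for t in self._calls if now - t < self.window]
        if depth > self.max_depth or len(self._calls) >= self.max_calls:
            self.safe_mode = True  # deterministic halt; stays tripped until reset
            return False
        self._calls.append(now)
        return True

    def reset(self) -> None:
        """Manual re-arm, intended for a human operator."""
        self._calls.clear()
        self.safe_mode = False
```

The design choice worth noting is the latch: once a limit is exceeded, the breaker does not recover on its own when traffic drops, so resuming operation is an explicit human decision rather than something the agent can wait out.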

10. How does the AI Governance Maturity Model compare six frameworks?

The CSI AI Governance Maturity Model evaluates CSI AISM, Google SAIF, CSA AICM, NIST AI RMF, Microsoft RAI MM, and ISO 42001 across six dimensions: GRC Strength, Liberty & Freedom, Actual Security, Engineer Actionability, Safety Controls, and Agentic AI Readiness. AISM scored 9.1/10 composite, the highest among all evaluated frameworks.

11. Can AIVSS and AI SAFE² be used together?

They should be. These frameworks operate at different layers of the AI security stack and complement each other. Use AIVSS to score and prioritize risks. Use AI SAFE² pillar controls to implement mitigations. Use AISM maturity levels to benchmark governance progress. Use NIST AI RMF for regulatory alignment. The optimal stack uses all of them in coordination.

12. What does the Sovereignty Matrix measure?

The Sovereignty Matrix models the continuum from human-controlled to fully autonomous AI operations as a formal governance construct. It treats the question of who has authority, the human or the agent, as measurable and governable rather than aspirational. It defines five maturity levels with specific control requirements at each level, culminating in cryptographic identity verification, immutable ledgers, and continuous adversarial testing at the Sovereignty level.

13. Is AI SAFE² open source?

Yes. AI SAFE² is dual-licensed MIT + CC-BY-SA. The code (MCP Server scripts, JSON schemas, Python patterns) is MIT-licensed for unrestricted commercial use. The framework methodology and documentation are CC-BY-SA, meaning organizations can fork, modify, and implement it without permission, payment, or membership. The full framework is available on GitHub.

14. What compliance standards does AISM map to?

AISM maps to NIST AI RMF (100%), ISO 42001 (100%), OWASP LLM Top 10 (100%), MITRE ATLAS (98%), MIT AI Risk Repository (100%), Google SAIF (95%), SOC 2 Type II, ISO 27001:2022, NIST CSF, HIPAA, and GDPR. The published Compliance Crosswalk provides formal mapping documentation.

15. What improvements does AIVSS v0.8 need before v1.0?

Four specific deliverables: (1) A formal skill taxonomy distinguishing atomic, composite, and delegated skills. (2) A formal execution model diagram or state machine for agent planning and tool-chaining. (3) An explicit adversary profile and trust boundary specification. (4) A formal derivation methodology for category selection. These should be surfaced at the top of the documentation before any category list.

16. What improvements should AI SAFE²/AISM prioritize?

Three enhancements would close remaining gaps: (1) A standalone formal skill taxonomy document published separately from the control architecture. (2) EU AI Act article-level compliance crosswalk for European enterprise procurement. (3) Formal STRIDE/PASTA threat modeling diagrams and trust boundary specifications to supplement the Agent Threat Control Matrix.

17. Where can I get started with implementing these frameworks?

For AIVSS: Engage with the OWASP community review opening April 16, 2026, and use the scoring calculator with the v0.8 documentation. For AI SAFE²/AISM: Start with the GitHub repository, review the Self-Assessment Tool, and implement the five pillar controls using the production Python patterns in the Developer Implementation Guide. The AI SAFE² Implementation Toolkit provides audit scorecards, governance policy templates, and engineering SOPs.

Cyber Strategy Institute | ai-safe2-framework | March 2026

The question is not whether your AI governance framework exists on paper. The question is whether it executes at runtime. If the agent moves faster than the oversight, the system is ungoverned.